A Safe Harbor For Abusers. A Nightmare For Survivors.

Why is X on the 2026 Dirty Dozen List?

X has become the front page for sexual abuse online, amplifying exploitation instead of preventing it. Not only did the platform decide to take “no action” on child sexual abuse material, but it continues to facilitate child abuse, image-based sexual abuse, AI deepfake pornography, prostitution / sex trafficking, and more. Its policies and lack of enforcement make X a safe harbor for abusers and a nightmare for survivors.

The Problem

The story of John Doe is one of heartbreak, resilience, and a fight for justice that could reshape the legal landscape for holding tech companies accountable.

John was just 13 years old when he fell victim to an online predator posing as a 16-year-old girl. Manipulated and blackmailed, he and a friend were coerced into creating explicit videos. Years later, these videos resurfaced on Twitter (now X), shared publicly for the world to see.

The humiliation was unbearable, especially when his classmates discovered the videos.

The shame and bullying drove John to the brink of suicide.

John and his mother reported the content to Twitter. They begged for the CSAM to be removed, even sending photos of John’s ID to prove that he was a minor. Twitter’s response left them heartbroken:

“We’ve reviewed the content, and didn’t find a violation of our policies, so no action will be taken at this time.”

The videos remained online for days, amassing over 167,000 views and 2,223 retweets before they were finally taken down—only after intervention from a family friend who happened to be a federal agent.

This case is not just about John.

It’s about a system that allows tech giants to evade accountability under the shield of Section 230 of the Communications Decency Act.

Originally designed to encourage responsible content moderation, Section 230 has been twisted into a near-blanket immunity for tech companies, even when they knowingly facilitate harm. Twitter’s refusal to act, despite clear evidence of CSAM, exemplifies the dangers of this unchecked power.

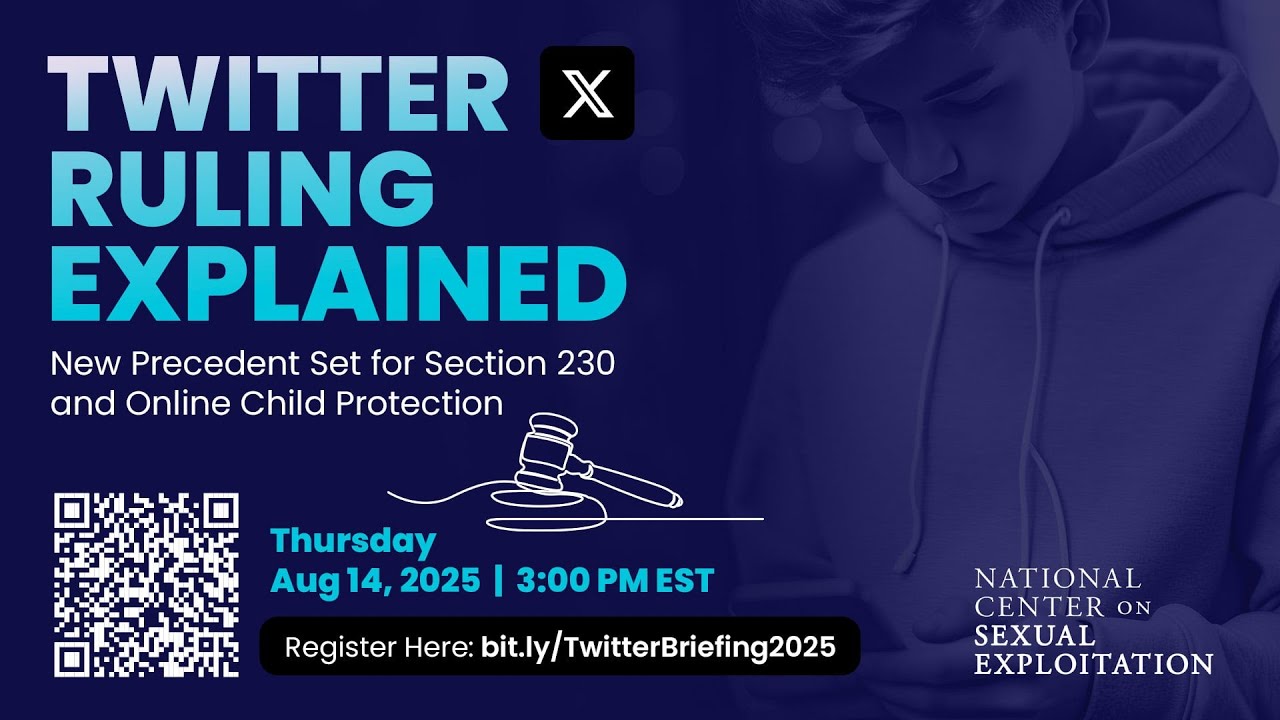

Why it’s time for the Supreme Court

The Ninth Circuit Court of Appeals recently ruled in John’s lawsuit that Section 230 does not shield Twitter from two claims: that it failed to report the CSAM depicting John to the National Center for Missing and Exploited Children (NCMEC), and that product defects made it difficult to report CSAM. This is a significant victory, as few cases have gotten this far.

However, the fight is far from over.

Unfortunately, the Ninth Circuit upheld parts of the District Court’s decision, granting Twitter Section 230 immunity on key claims. This included immunity for allegations that Twitter knowingly possessed and distributed CSAM, failed to remove it after being notified, and allowed its platform features, like search suggestions and hashtags, to amplify the content.

Most troubling, the court also ruled that Section 230 shielded Twitter from claims it knowingly profited from sex trafficking, despite the 2018 FOSTA-SESTA law explicitly designed to hold tech companies accountable in such cases. While FOSTA-SESTA was celebrated as a victory, courts have largely failed to enforce it as intended, with only one successful case to date.

This raises a critical question: if Twitter’s actions in this case don’t meet the threshold for liability under FOSTA-SESTA, what will? The takeaway is clear—piecemeal reforms to Section 230 are insufficient, as Big Tech continues to dilute their impact through lobbying, leaving survivors without justice.

It’s time for the Supreme Court to step in and provide clarity for the courts.

John’s story is a testament to the resilience of survivors and the power of advocacy. The Supreme Court must hear this case, not just for John, but for every child who has suffered in silence.

John Doe’s story does not exist in isolation. Tragically, child sexual abuse material (CSAM) and adult image-based sexual abuse (IBSA) remain persistent and rampant problems on X (formerly Twitter).

Proof: Evidence of Exploitation

WARNING: Any pornographic images have been blurred, but are still suggestive. There may also be graphic text descriptions shown in these sections. POSSIBLE TRIGGER.

Child sexual abuse material (CSAM)

X (formerly Twitter) is a popular platform used to advertise the ability to buy or trade child sexual abuse material (CSAM), connecting potential buyers to sellers who can then move conversations to encrypted links or messaging services. Many people also upload CSAM directly onto X.

This is a long-standing problem going back years.

X/Twitter has been named to the 2021, 2022, and 2023 Dirty Dozen List. Each year NCOSE has gathered research and proof about CSAM on the platform [available to journalists or policymakers who inquire], yet the core platform design issue at hand remains: X/Twitter officially allows pornography, yet fails to provide any meaningful age or consent verification to prevent child sexual abuse material or adult non-consensually shared sexual material (image-based sexual abuse).

X says that it participates in NCMEC’s hashing system, a database of digital “fingerprints” of already-known child sexual abuse material that lets platforms automatically detect and block those same files when they are uploaded again. X also claims to develop its own hashing system for commonly reported materials. This is valuable because it helps stop the re-circulation of previously identified CSAM, but it is also the bare minimum, since it can only catch images that are already in the database.

The material most sought by offenders, however, is new, never-before-seen CSAM, which hash-matching cannot catch. This means platforms that permit massive volumes of sexually explicit content inevitably become high-risk environments where novel CSAM can be created, uploaded, and circulated before anyone has a chance to hash or report it.
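The limitation described above can be illustrated with a minimal sketch. It assumes exact SHA-256 matching as a simplified stand-in; the systems platforms actually use may rely on different or perceptual hash types, and the names `fingerprint` and `HashBlocklist` are hypothetical, chosen only for illustration. The byte strings are neutral placeholders for file contents.

```python
import hashlib


def fingerprint(data: bytes) -> str:
    """Return a digital fingerprint (here, a SHA-256 hex digest) of a file's bytes."""
    return hashlib.sha256(data).hexdigest()


class HashBlocklist:
    """Hypothetical blocklist holding fingerprints of previously reported files."""

    def __init__(self) -> None:
        self._known: set[str] = set()

    def add_known(self, data: bytes) -> None:
        # Only files that have already been identified and reported get hashed.
        self._known.add(fingerprint(data))

    def is_blocked(self, data: bytes) -> bool:
        # An upload is caught only if its fingerprint is already in the database.
        return fingerprint(data) in self._known


blocklist = HashBlocklist()
previously_reported = b"placeholder bytes of a previously reported file"
blocklist.add_known(previously_reported)

# A re-upload of the same file matches the database and is blocked.
reupload_blocked = blocklist.is_blocked(previously_reported)

# A file that was never reported has no fingerprint on record, so it passes.
novel_blocked = blocklist.is_blocked(b"placeholder bytes of a never-seen file")
```

In this sketch the re-upload is blocked and the new file is not, which is why hash-matching alone works only as a floor and cannot replace screening at the point of upload.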

X has a choice: it could either change its policy to no longer allow pornographic material and restrict all sexually graphic content, or it could institute advanced age/consent verification tactics to ensure no child or non-consensual material is ever uploaded to its platform. But X fails to do either. It allows largely unrestricted sexual uploads, with no robust verifications, and therefore it becomes a prime marketplace for abusive content.

It is also important to note that in November 2025, X launched an end-to-end encrypted direct messaging system, now labeled “Chat” within the X app for premium users. The encrypted Chat can be used to send images, videos, and files to individuals or in group messages, all of which are inherently high-risk activities for child sexual abuse material (CSAM) and other crimes. X admits that “it is not possible to report an Encrypted Direct Message to X due to the encrypted nature of the conversation.” [emphasis added.] Given the well-documented way encrypted chats are used to facilitate CSAM on Telegram, WhatsApp, and other platforms, it appears X is accepting that its DMs will become a cesspool of CSAM.

“Man Sentenced to 17.5 Years in Federal Prison for Selling Child Sexual Abuse Material on Twitter”

U.S. Department of Justice (Southern District of Indiana)

Date: May 15, 2025.

Federal prosecutors say a man used publicly accessible Twitter (now X) accounts (Dec 2022–Mar 2023) to advertise and sell links to cloud folders containing hundreds of CSAM files, including files depicting victims under the age of 12. He posted thumbnails, organized files by victim-age categories, and provided payment handles (CashApp/Apple Pay). In other words, he ran the operation openly on Twitter’s platform.

The DOJ press release describes Twitter/X as the online location the defendant used to reach buyers.

It notes: “Many of his advertisements themselves contained thumbnails of sexually explicit images …[He] advertised as if he was running a legitimate retail business. He organized the files based on various characteristics, such as the age of the minor victims, and he based the price on what he was offering. Material with younger victims garnered a higher price. He also created videos showing himself scrolling through the folders containing child sexual abuse materials in order to give his customers a preview.”

“San Antonio Man Sentenced to More Than 16 Years in Federal Prison for Distributing Child Pornography”

U.S. Attorney’s Office (Western District of Texas DOJ)

Date: March 7, 2025.

Court documents say a man operated a chat room and distributed CSAM using both Telegram and X (formerly Twitter). Investigators identified roughly 81 files posted between Dec 1, 2022 and Apr 30, 2023; the DOJ release explicitly names X as one of the channels used to distribute the material.

“Greenfield Man Charged with Sexual Exploitation of Children in Person and Online, via Instagram, Snapchat, and X (Twitter) Account maps.syb”

U.S. Department of Justice (Southern District of Indiana)

Date: March 21, 2024.

Prosecutors say a user (identified in filings as “maps.syb”) uploaded suspected CSAM to multiple social platforms — Instagram, Snapchat, and X (formerly Twitter) — and the National Center for Missing & Exploited Children (NCMEC) provided a tip to investigators.

The DOJ complaint ties uploads to the X account as part of the evidence used to charge the defendant.

It notes: “In addition to having sexually explicit conversations, [the man] was able to coerce the children to produce and send to him sexually explicit images and videos of themselves. [He] also allegedly arranged to meet minors in person to engage in sexual activity. HCMCETF discovered that [he] traveled to at least three different cities, one which was out of state, to have sex or attempt to have sex with underage children. In at least one of these instances, [he] sexually abused a 12-year-old girl. Further, [he] distributed videos and pictures of children that he had obtained from various victims to another child.”

“Discord User Who ‘Catfished’ Young Victims Is Sentenced to 21 Years in Prison for Production of Child Sexual Abuse Material”

U.S. Department of Justice (Western District of North Carolina)

Date: June 4, 2024.

In this case the defendant used multiple online services to groom and exploit victims — the DOJ states the offender produced and distributed child pornography via Discord and Twitter. The release highlights that Twitter was one of the social platforms used to contact victims and to distribute abusive material.

The man “used Twitter to communicate with minors and to receive child pornography created by the victims. In total, Wehrstein possessed more than 4,197 images and videos of child pornography. The investigation further determined that Wehrstein had induced at least eight male victims, between the ages of 12 and 17, to produce and send him child pornography via Discord and Twitter.”

Investigators Report About Sexual Abuse Cases on X

The International Protection Alliance (IPA)* produced a breaking report for the NCOSE Dirty Dozen List, summarizing multiple cases in which Twitter/X was used to facilitate, promote, monetize, and distribute sexual exploitation and abuse of minors. The cases illustrate recurring patterns of platform misuse, inadequate safeguards, and potential systemic negligence.

Investigators noted that “X makes up roughly 1/3rd of all our cases.” In addition to the report below, they described to NCOSE a rising trend of “offenders charging for grooming services where the buyer will nominate the social media accounts of target and the offender will groom them.”

*IPA is a 501(c)(3) nonprofit that conducts open-source intelligence investigations, assisting law enforcement agencies with actionable intelligence and prosecution-ready cases to dismantle networks of exploitation and target those who harm children and minors.

Image-based Sexual Abuse (IBSA), “Revenge Porn,” and AI Deepfakes

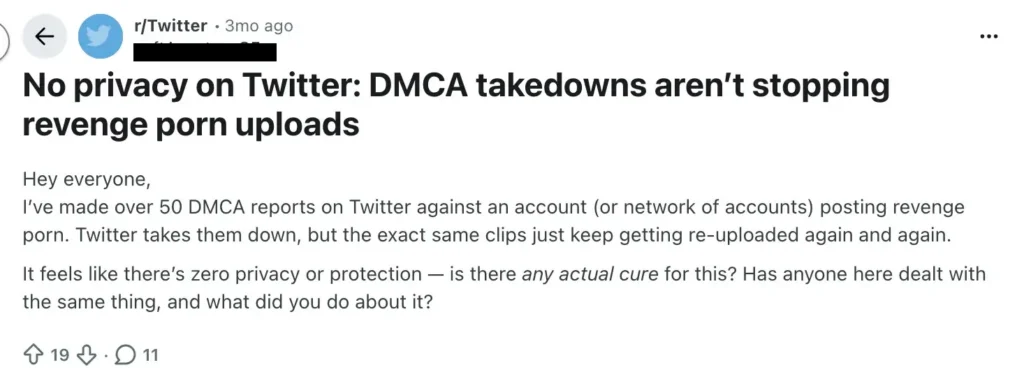

X (formerly Twitter) has become a well-known hub where people openly post non-consensually shared sexual content (sometimes called “revenge porn” but more accurately called “image-based sexual abuse (IBSA)”), making exploitation easy to post and hard for victims to escape.

This is a pattern that has persisted for years.

X/Twitter has appeared on the Dirty Dozen List in 2021, 2022, and 2023 because NCOSE has repeatedly documented widespread IBSA on the platform [materials available to journalists or policymakers upon request]. The underlying problem is straightforward: X/Twitter permits pornography but refuses to implement meaningful age or consent verification, creating an environment where abusive, non-consensual sexual content can flourish unchecked.

X faces a clear choice. It could end the rampant abuse by prohibiting pornographic material altogether and enforcing that policy, or it could adopt robust age-and-consent verification systems. Instead, it chooses neither path. By allowing nearly unrestricted sexual uploads with no meaningful verification, X allows image-based sexual abuse to thrive with impunity.

This platform choice has real victims.

For example, one woman’s ex-partner uploaded a sexually explicit video of her to X in 2025. In a police interview months later, the man admitted “100%” that he posted the video.

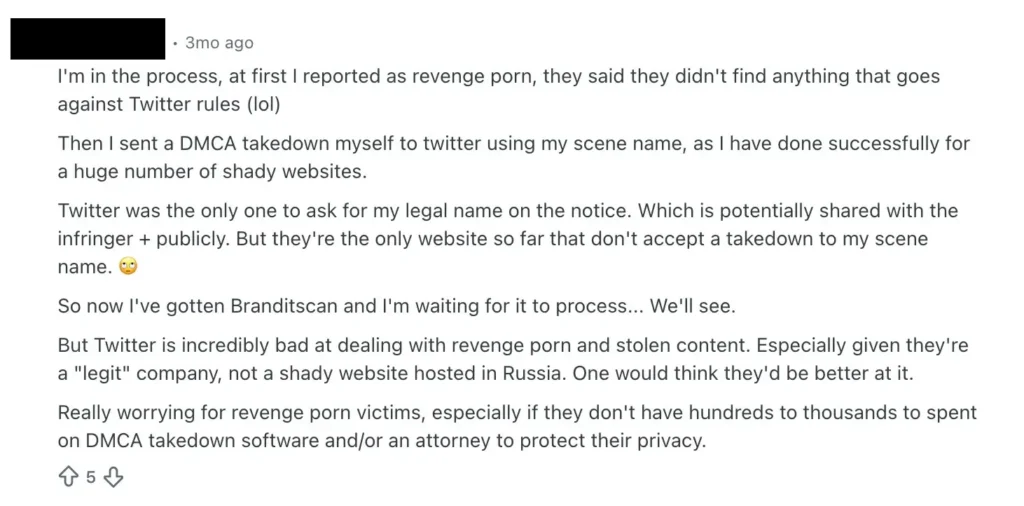

Also in 2025, many survivors have taken to online forums to request help or advice for IBSA content on X.

One user posted:

“someone is sharing old private content related to me which was sent via private telegram chat only, meaning to public link the original content of me. The user has created a public page where they post all kinds of ‘adult’ content. I have reported it multiple times for privacy violations, but X keeps replying that it doesn’t break their (stupid) policy. My videos have been watched almost 200,000 times, and X is doing nothing.”

[Emphasis added, screenshot available to journalists or policymakers for verification upon request.]

Again, these problems have long histories. In 2024, a study exposed systemic problems with X’s reporting mechanisms. The study authors created 50 synthetic nude “deepfake” images and posted them on X (formerly Twitter), then submitted takedown requests using two different paths: (1) X’s built-in “non‑consensual nudity” reporting mechanism, and (2) a copyright‑infringement (DMCA) claim.

The results were shocking: every single image reported under the DMCA was removed — all within 25 hours. Meanwhile, none of the images reported under the non‑consensual nudity policy were removed over the full 21‑day monitoring period (zero removals).

X’s policies against non-consensually shared sexual content are not robustly or proactively enforced. In less than 2 minutes, NCOSE researchers found clear violations of X’s policies, including “leaked” (aka nonconsensual) explicit content. See the section “Are X Policies PR?” below to learn more.

Survivors of Image-based Sexual Abuse Experience Significant Trauma

The Cyber Civil Rights Initiative conducted an online survey regarding non-consensual distribution of sexually explicit photos in 2017. The survey found that survivors of IBSA had “significantly worse mental health outcomes and higher levels of physiological problems,” compared to those without IBSA victimization. A previous analysis noted that such distress can include high levels of anxiety, PTSD, depression, feelings of shame and humiliation, as well as loss of trust and sexual agency. The risk of suicide is also a very real issue for those who are victimized; there are many tragic stories of people taking their own lives as a result of this type of online abuse. An informal survey showed that 51% of responding survivors reported having contemplated suicide as a result of their IBSA experience.

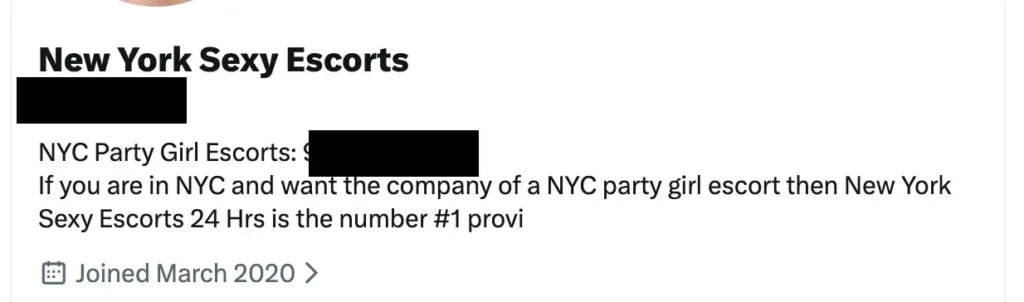

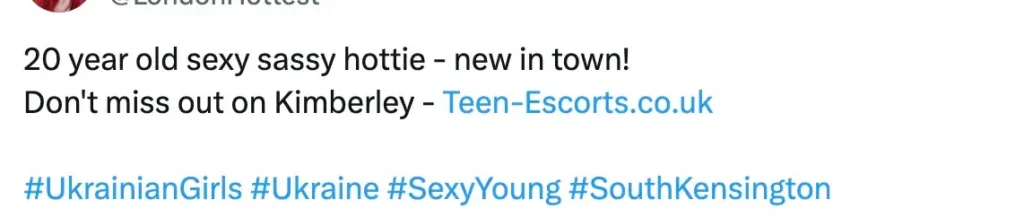

Prostitution / Sex Trafficking

Both X accounts and posts promote prostitution / sex trafficking. Some include disturbing red flags for sex trafficking, such as the phrase “new in town,” alluding to a person who has fled a foreign country, like Ukraine, due to a conflict. Immigrants are often victimized in sex trafficking due to economic and social vulnerabilities.

Allowing the promotion of prostitution on a social media platform inherently facilitates sex trafficking because the platform cannot determine whether any given post or account involves genuine consent or coercion. Traffickers exploit this invisibility, using online accounts, posts, and ads to reach potential buyers while hiding the abuse and manipulation behind curated profiles. By providing a public marketplace for sexual services, Twitter/X amplifies traffickers’ reach and profit potential, making it structurally impossible to prevent exploitation and turning every post into a potential vehicle for trafficking.

Further, it is an accepted fact, supported by survivors of sex trafficking, that pornographic pictures and videos are used to advertise both prostitution and sex trafficking victims (including minors). In addition, law enforcement has long found that many victims of sex trafficking and of child sexual abuse material (i.e. child pornography) are coerced into creating livestream or webcam pornography as well. Accounts posting and selling pornographic content, from images to videos to livestreams, as well as requests for sugar baby/mommy dates, are undeniably flourishing on Twitter.

These examples are a small sampling and were found by NCOSE researchers in less than 8 minutes.

Additional material documenting this historic problem from 2017-2021 is available to journalists or policymakers upon request.

Are X Policies PR? Blatant Violations Found in Less Than 2 Minutes

X policies allow “pornography and other forms of consensually produced adult content…”

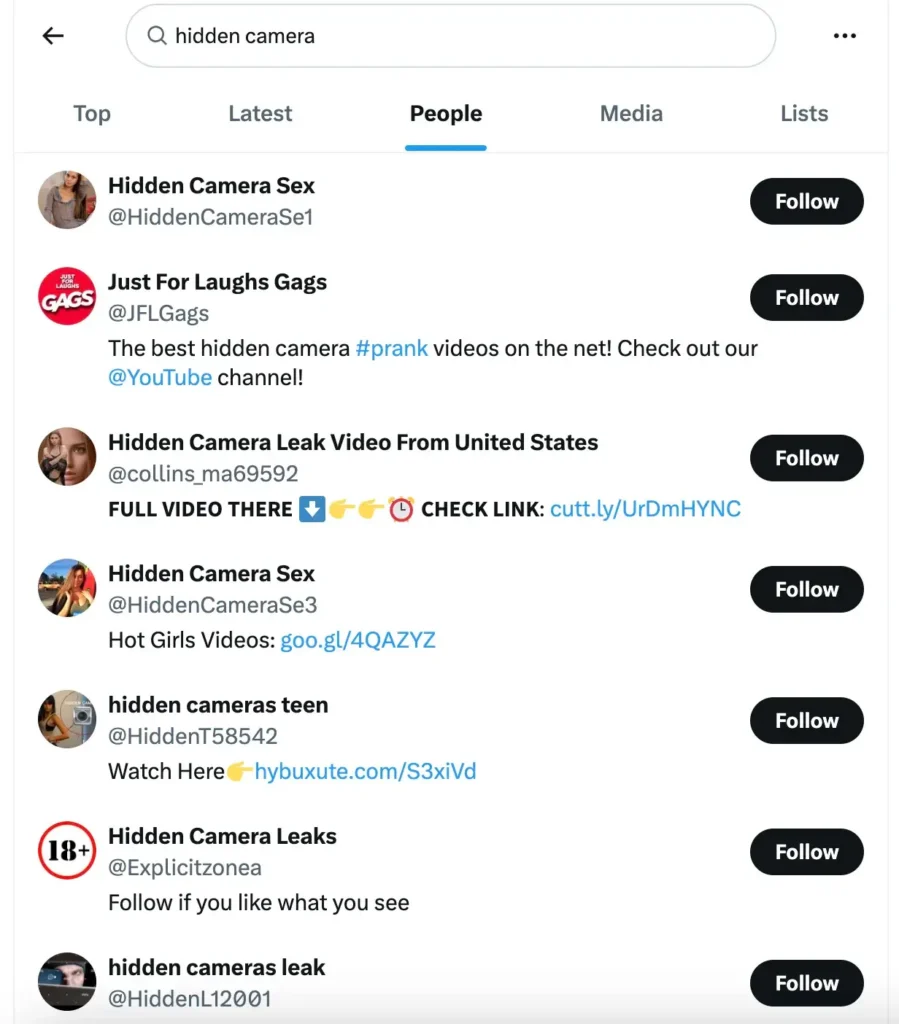

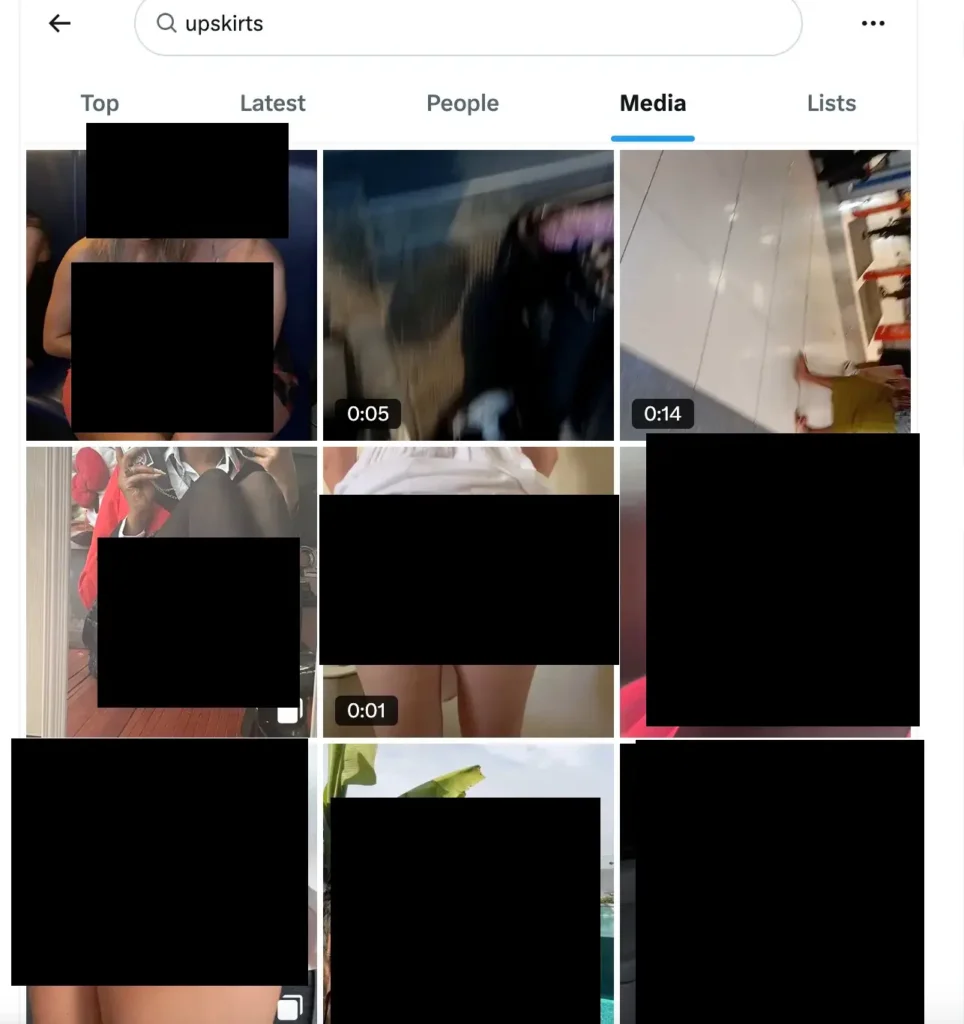

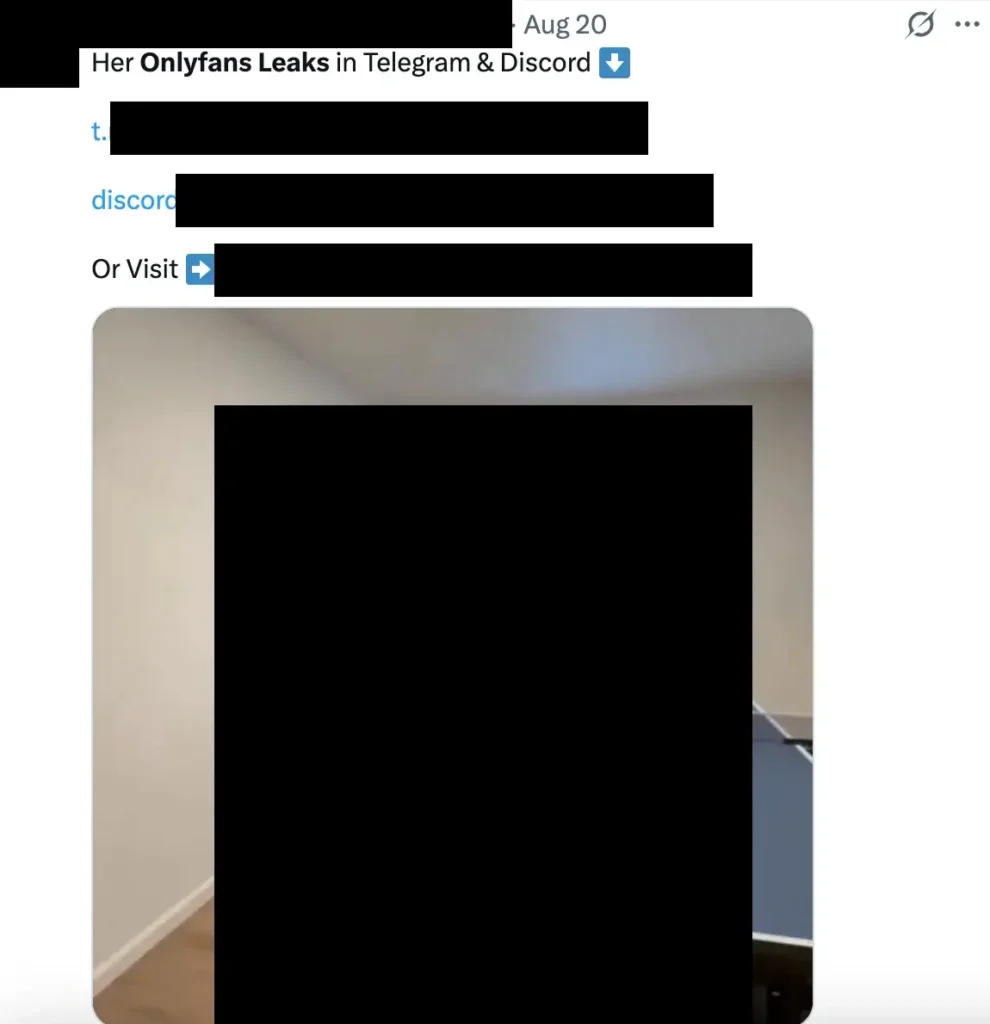

The policies do NOT allow non-consensually shared content, “hidden camera”, “creepshots or upskirts,” “digitally manipulat[ing] an individual’s face” for pornographic content, or any “bounty or financial reward” in exchange for sexually intimate images.

Unfortunately, policies on paper do not equate to policies in practice.

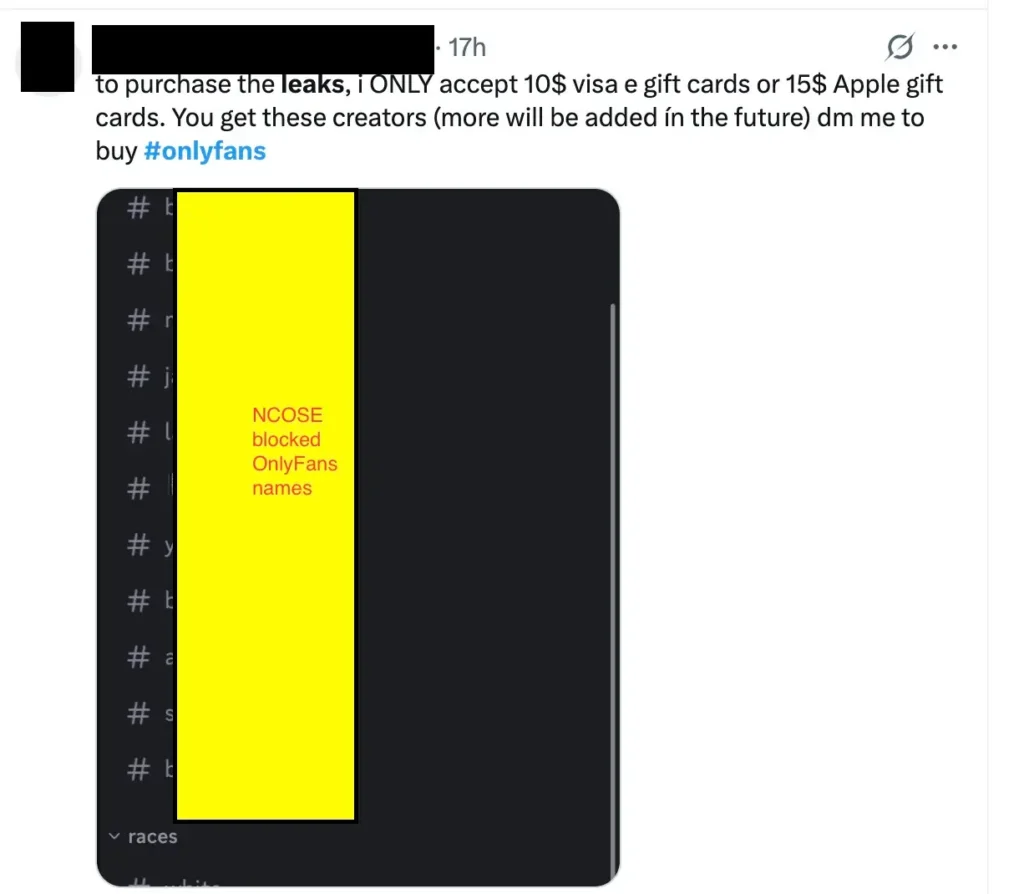

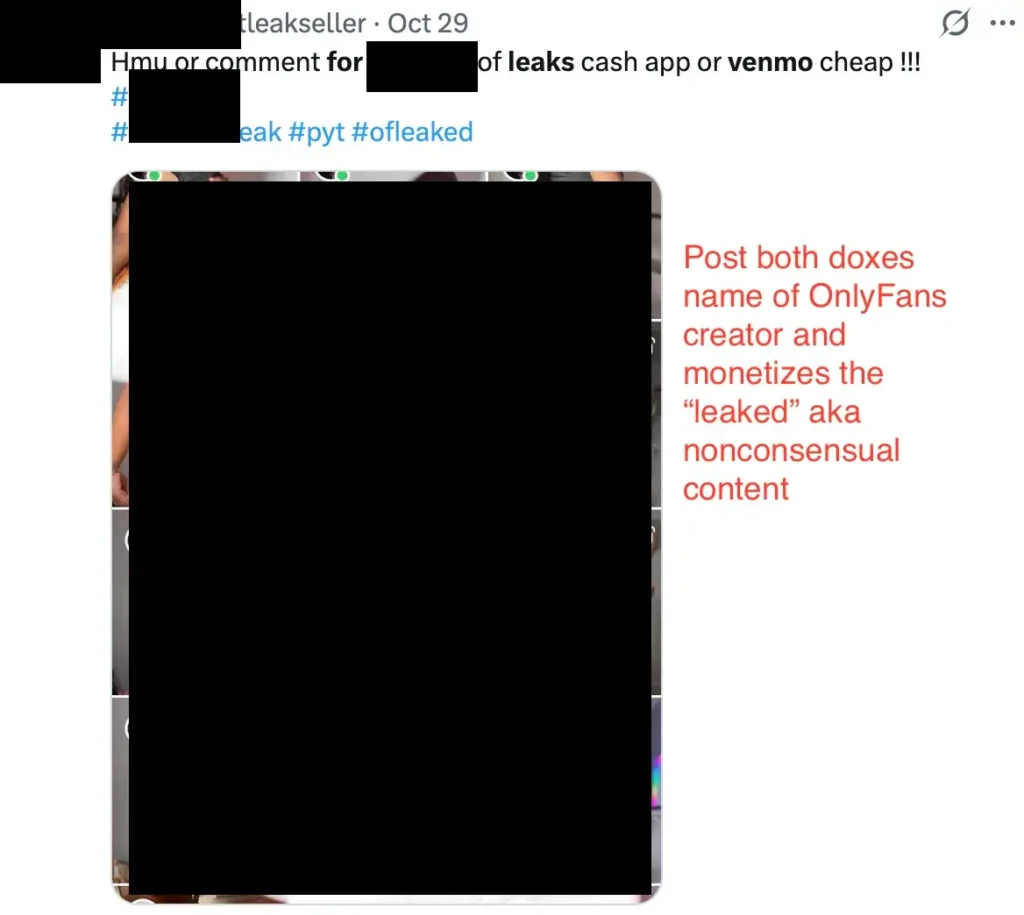

Blatant violations of X’s policies on hidden camera porn, creepshots/upskirts, and “leaked” porn [aka nonconsensual] were found in less than 2 minutes by a NCOSE researcher.

It is easy for a company to write out a list of what they do not allow, but if the company broadly fails to proactively enforce that policy, then in reality it amounts to PR.

X claims to not allow nonconsensual sexual material or promoting “financial reward” in exchange for it, yet with a simple search, it is immediately discoverable.

X claims to not allow “creepshots or upskirts” porn yet with a simple search, photos of just that are immediately discoverable.

X claims to not allow nonconsensual sexual material or promoting “financial reward” in exchange for it, yet with a simple search, accounts doing just that are immediately discoverable.

Fast Facts

X was the #1 app flagged for severe sexual content by Bark in 2025. Of note, X had been the #2 app flagged for severe sexual content the previous two years (2023-2024), but bumped Kik out of the top spot in 2025.

A 2025 survey in the UK found X was the top online platform where children saw pornography (45%), even higher than exposure through dedicated pornography sites (35%). X was also the most common source by which children were exposed to AI-generated pornography (35%).

In a recent poll of 1,013 U.S. parents, when asked about their child’s experience growing up, 62% said they wish that X had never been invented. A similar poll of Gen Z adults (ages 18-27) found 50% wish X (formerly Twitter) had never been invented.

Resources

What To Do If You’ve Been Victimized by Twitter: See If You Qualify for a Lawsuit

NCMEC’s Take It Down service: Resource for minors to remove their sexually explicit content from online platforms

Report suspected child sexual exploitation to the National Center for Missing and Exploited Children (NCMEC) Cyber Tipline

Stop Non-Consensual Intimate Image Abuse (StopNCII) – Resource for adults to remove image-based sexual abuse from online platforms

The Bark Blog: Is Twitter Safe? An App Review For Parents

Protect Young Eyes: X / Twitter App Review and Parental Controls

In this free resource from the National Center on Sexual Exploitation, we explore the classifications and definitions of image-based sexual abuse. This includes details on terminology to reject and subcategories that are included under the IBSA umbrella.

Recommended Reading

NCOSE Law Center:

Petitions Supreme Court to Interpret Section 230 on the 30th Anniversary of its Passage

NBC News:

Accounts peddling child abuse content flood some X hashtags as safety partner cuts ties

Share!

Help educate others and demand change. Use these free resources to post on social media or share via email, and spread the word to hold Big Tech accountable. Your voice can create change!