Predators Are Grooming Kids in Plain Sight

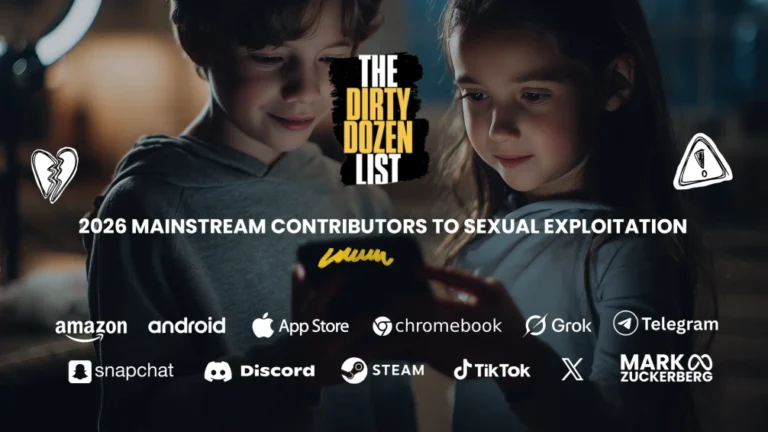

Why is TikTok on the 2026 Dirty Dozen List?

TikTok continues to fail at protecting children, letting predators use messaging, comments, and livestreams to groom and exploit minors. Internal documents show the company knew the risks but did too little to stop them. Meanwhile, online pimp “talent agencies” are using TikTok to funnel users into pornographic content creation.

"TikTok has created an environment where predators thrive, using livestreams, comments, and private messages to groom and exploit minors."

- NCOSE Researcher

The Problem

“We’ve created an environment that encourages sexual content,” someone at TikTok admitted, according to internal documents.

TikTok has made several safety updates we’ve requested over the years, for which we are grateful, and for a while this kept the company off the Dirty Dozen List. However, as evidence mounts that sexual exploitation still runs rampant on TikTok, while the company fails to take adequate action and even introduces more dangerous tools, we are once again calling it out.

It has become clear that, despite their previous changes, TikTok has created an environment where predators thrive, using livestreams, comments, and private messages to groom and exploit minors.

- An NCOSE researcher spent 15 minutes on a test TikTok account set up as a 15-year-old user and discovered over 50 posts and comments with high-risk indicators of child sexual abuse material (CSAM) trading and networking.

- Internal documents revealed TikTok profited from “transactional gifting” involving nudity and sexual activity AND that TikTok is aware its LIVE recommendation algorithm “prefers feeds with gifts,” which it admits “incentivizes sexual content.” This design is extremely high risk for commercial sexual exploitation and/or grooming.

- A global child-pornography ring used TikTok as a marketplace to advertise, solicit, and purchase over 1 million CSAM files, with an undercover agent uncovering 6.7 terabytes of material.

- Children have been targeted on TikTok and groomed for sexual abuse or sextortion, even resulting in one 14-year-old boy’s death.

- And despite being marketed on the Apple App Store as safe for children 12+ years old, first-hand accounts from former recruiters and multiple investigations reveal TikTok functions as a recruitment pipeline for the pornography site OnlyFans.

Meanwhile, the platform’s new private messaging features, released in 2025 and complete with voice notes and photo sharing, are available to minors 16 and older, opening a new path for abusers to isolate and manipulate young users. Even with promised safeguards like nudity-detection filters and limits on first-contact messages, TikTok is introducing tools that make it easier for abusers to initiate intimate conversations, pressure teens for imagery, and escalate exploitation behind closed doors.

TikTok’s Flip-Flop:

See what their Head of Safety said in 2020 compared to new features they rolled out in 2025…

In fact, compare these features to just a few years ago. TikTok’s Head of Safety wrote in 2020:

“Unlike other platforms, we don’t permit images or videos to be sent in comments or messages. This was a deliberate decision on our part: studies have shown that a proliferation of Child Sexual Abuse Material has been linked and spread via messaging. TikTok was built to provide a positive place for creativity and we prioritize the safety of our users. From the very beginning we chose not to allow users to upload photos or videos to their messages.”

Now, TikTok has reversed this stance by rolling out the photo-sharing capabilities in comment sections around mid-2025 and in DMs starting late 2025 (with features like sending up to nine images/videos per message). This is a significant and disturbing shift away from safety-by-design towards profit-by-interaction.

While TikTok has placed some important restrictions on direct messaging for minors, including having it off by default for 16- and 17-year-olds (they can still turn it on) and disabling the feature for users 15 and under, this does not fully protect minors. First, older teens are often at even higher risk of sexual exploitation than younger children. Second, there have been reports of “hundreds of thousands of children” bypassing TikTok’s age restrictions. And beyond direct messaging, adult strangers can still reach children on TikTok through comments, public posts, and livestreams, where real-time interactions and virtual gifts create opportunities for grooming and exploitation.

TikTok’s Predator Problem:

See what law enforcement and child-safety advocates have to say about TikTok’s insufficient protections…

Law-enforcement cases show predators continue to use TikTok’s messaging, comments, and especially livestreams to initiate grooming, solicit sexual images, and coerce minors into harmful interactions. And large sextortion investigations also list TikTok among the platforms leveraged by offenders, demonstrating that predators see the app as an easy entry point to children due to weak front-line protections. [See Proof below.]

Compounding this, reporting and child-safety advocates have raised concerns that certain third-party “talent management” or “marketing” agencies (aka online pimps) exploit TikTok to recruit vulnerable teens and young adults into commercial sexual content ecosystems, including directing them toward platforms like OnlyFans. Combined with TikTok’s insufficient protections around livestreams and discovery, this creates a pipeline where minors and young adults can be targeted, groomed, and funneled toward sexual content creation.

It’s time for TikTok to do better.

Who Owns TikTok?

Learn more about the forces behind TikTok and who has responsibility for trust and safety…

In January 2026, TikTok created TikTok USDS Joint Venture LLC—a new, majority American-owned entity designed to meet U.S. national security requirements and avoid a potential ban. ByteDance, TikTok’s Chinese parent company, now holds a 19.9% minority stake, while U.S. and allied investors—including Oracle and Silver Lake—control the majority. This U.S.-based entity is responsible for overseeing American user data (stored with Oracle), the recommendation algorithm (which is licensed but secured), content moderation, and day-to-day operations under a majority-American board.

From a child safety perspective, this new structure puts responsibility for trust and safety policies, content moderation, and reporting on harms like sextortion, grooming, and exploitation squarely in the hands of the U.S. entity. That creates a real opportunity to set a higher bar for prevention and safety. So far, however, there’s little indication that core platform features, algorithms, or moderation practices have meaningfully changed.

Proof: Evidence of Exploitation

WARNING: Any pornographic images have been blurred, but are still suggestive. There may also be graphic text descriptions shown in these sections. POSSIBLE TRIGGER.

Livestream Video and Virtual Currency Facilitating Child Exploitation and Sex Trafficking on TikTok

Media investigations and legal complaints are uncovering shocking facts about how TikTok has facilitated and even profited from sexual exploitation on livestream.

One example of this is a 2025 BBC investigation that documented accusations that TikTok profited from child sexual exploitation livestreaming. The BBC stated: “three women from Kenya contacted the BBC, disclosing that they had begun producing sexually explicit content in exchange for digital gifts during their teenage years. Additionally, they revealed that TikTok actively promoted and facilitated payment negotiations for more explicit material, which was then distributed and monetized across other platforms.”

Another example is a legal complaint filed against TikTok by the Utah Division of Consumer Protection, which accused TikTok of profiting from manipulative design features and its virtual currency system. According to the complaint, these facilitate child exploitation, sex trafficking, and other illegal activities through the “TikTok LIVE” feature. The complaint highlights TikTok’s failure to implement adequate safeguards and its prioritization of profits over user safety, particularly for minors.

Below are quotes from the full complaint which can be accessed here. Emphases added:

Through a feature called “TikTok LIVE,” users can stream live videos of themselves and interact with viewers in real time. Combined with TikTok’s virtual currency system, this feature allows adults to prey on children in many egregious ways, including by transacting with and soliciting sexual acts from minors. Despite knowing and facilitating these dangers, the company turns a blind eye because LIVE has helped make TikTok very rich.

…TikTok has long known—and hidden—the significant risks of live streaming, especially for children. By TikTok’s own admission: “we’ve created an environment that encourages sexual content.”

…

In early 2022, TikTok’s internal investigation of LIVE, called “Project Meramec,” revealed shocking findings. Hundreds of thousands of children between 13 and 15 years old were bypassing TikTok’s minimum age restrictions, hosting LIVE sessions, and receiving concerning messages from adults. The project confirmed that LIVE “enable[d the] exploitation of live hosts” and that TikTok profited significantly from “transactional gifting” involving nudity and sexual activity, all facilitated by TikTok’s virtual currency system. Worse yet, TikTok knows that its LIVE recommendation algorithm “prefers feeds with gifts,” which it admits “incentivizes sexual content.” This means TikTok knows that users are more likely to exchange Gifts for sexualized acts and content and is aware it is promoting sexual exploitation on its app. TikTok admits: “Transactional sexual content incorporates tons of signals that inform the algorithm as well as Live Ops metrics of success . . . .” Despite this, TikTok looks the other way because sexually exploitative content boosts business: “[t]ransactional sexual content hits most of [TikTok’s] business’ metrics of success & is pushed to TopLives.” Despite admitting internally that LIVE poses “cruel[]” risks to minors— encouraging “addiction and impulsive purchasing of virtual items,” leading to “financial harm,” and putting minors at “developmental risk”—TikTok continues to use manipulative features to increase the time and money users spend on the app. TikTok has added additional monetization features like “exclusive” Gifts and subscription-based services, where users can subscribe to their favorite live streamers for a monthly fee. TikTok does not disclose its cut from subscriptions but pushes users to buy-in for an even “closer” connection with streamers, promising perks like “subscriber-only chat.” Any responsible company would shut down a feature if it facilitated children being exploited and adults paying children for sexual acts. 
But TikTok is too hooked on LIVE’s massive revenue stream. Instead, in response to reports of rampant sexual exploitation on LIVE, TikTok has made superficial changes it knows does not solve the problem.

…

TikTok admits that sexual exploitation and illegal activities on LIVE are “controversial” and worsened by its own monetization scheme. Despite acknowledging internally that “sexually suggestive LIVE content is on the rise,” TikTok refuses to warn consumers about these dangers. Instead, TikTok plans to “make better use of monetization methods such as gifting and subscription to gain revenue…

… age restrictions [for going Live on TikTok] are nothing more than hollow policy statements. Despite what TikTok claims, it refuses to enforce meaningful and effective oversight of users’ ages. TikTok knows many users lie about their age and it does little to ensure its policies are adequately enforced and effective.

… because of TikTok’s financial motivators and algorithmic boosters, TikTok LIVE has become a seedy underbelly of sexual exploitation.

… In February 2022, two TikTok leaders discussed the need to remove “egregious content from clearly commercial sexual solicitation accounts,” and were aware of issues with women and minors being sexually solicited through LIVE. Even more insidious, these leaders knew about agencies that recruited minors to create Child Sexual Abuse Material and commercialized it using LIVE. In another example from a March 2022 LIVE safety survey, users reported that “streamer-led sexual engagements (often transactional) [were] commonly associated with TikTok LIVE.” Users also reported “often seeing cam-girls or prostitutes asking viewers for tips/donations to take off their clothes or write their names on their body . . . .” That same month, TikTok employees admitted “cam girls” (or women who do sex work online by streaming videos for money) were on LIVE and that these videos had a “good amount of minors engaging in it.” TikTok leaders have known since at least 2020 that TikTok has “a lot of nudity and soft porn.”

… In 2023, U.S. users reported in a survey that 13.7% of LIVE streams contained what TikTok calls “adult nudity and sexual activity”—1.9% higher than the rest of the world. At the same time, TikTok cannot guarantee that this reported “nudity and sexual activity” on LIVE is actually performed by adults, rather than children. As TikTok employees recognized, the company “created an environment that encourages sexual content . . . . [The] Live Recommendation algorithm prefers feeds with gifts, so [it] incentivizes sexual content.”

“Child Porn”/CSAM Rings, Sextortion, and Child Sexual Exploitation on TikTok

Below is a small sample of recent sexual abuse cases involving TikTok.

Florida men charged in ‘truly heinous’ global child porn ring involving over 1M files

2025 – Fox News

Authorities in Florida arrested a group of men after uncovering a global child pornography ring — the suspects were found to have purchased child sexual abuse material (CSAM) through advertisements on TikTok. The ring reportedly involved over 1 million videos and images, with an undercover agent acquiring 6.7 terabytes of material, showing how predators used TikTok not just for contact or grooming, but as a marketplace gateway to locate, solicit, and purchase CSAM. The men face charges including conspiracy, purchase of CSAM, and use of communication devices to facilitate their crimes. According to law enforcement statements, TikTok ads played a direct role in facilitating access to this content — exposing how social media platforms can be exploited as distribution or recruitment hubs for global child abuse networks.

Boy, 14, thought he was flirting with woman online before being found dead 35 minutes later

2025 – Ladbible

A 14-year-old boy met someone on TikTok whom he believed to be a 14-year-old girl and became the victim of a sextortion scam. According to media reports, the sextortionist flirted with the boy and groomed him into moving their conversation to Snapchat, where they exchanged nude images, and then began extorting him for money. After the 35-minute exchange, he tragically died by suicide. “They made him feel like his life was over as he had made this mistake,” said his mother.

Minnesota man traveled to Virginia for ‘illicit acts’ with teen he met on TikTok: police

2025 – KFOX14

A man was arrested after traveling across the country to meet a 15-year-old girl he had first connected with on TikTok. According to police, the interaction began on the platform, where he initiated contact and then continued private communication that escalated into grooming and plans for an in-person encounter. When he arrived to meet the teen, officers intervened and arrested him on charges including solicitation of a minor and production of child sexual abuse material. The case highlights how TikTok served as the entry point for the exploitation, enabling a predator to identify, contact, and groom a minor before attempting to move the relationship offline.

BM Boys: the Nigerian sextortion network hiding in plain sight on TikTok

2025 – The Guardian

On TikTok, the BM Boys, a Nigerian sextortion network, publicly flaunt cash, cars, designer clothing, and other signs of wealth gained through blackmailing minors, primarily teenage boys in the U.S. and other Western countries. TikTok functions as both a recruitment tool and a promotional platform: videos showing luxurious lifestyles attract followers who then seek to join the network, effectively training and onboarding new scammers. The platform amplifies the appeal of the scheme, normalizes criminal behavior, and indirectly facilitates sextortion by helping the perpetrators build influence and entice others to target victims.

East Alton Man Charged With Grooming Children Via TikTok

2024 – RiverBender

A 28‑year-old man was charged with two counts each of grooming and indecent solicitation of children. Court documents allege he used TikTok to contact two minors. The messages allegedly became sexual, discussing intercourse or oral sex, and the suspect attempted to groom and solicit the minors.

Ohio man sentenced to 5 years after ‘grooming’ underage girl

2024 – FOX19 NOW

A 33-year-old man was sentenced after pleading guilty to “Procuring or Producing Use of a Minor by Electronic Means.” According to court documents, he met a 12‑year-old girl on TikTok in March 2023 while he was pretending to be 17, then moved their communications to texting. Over thousands of pages of messages, he groomed the girl, coaxed her into sharing nude photos, sent her gifts and a necklace, and engaged in sexual communication. “[He] was a classic case of grooming,” Major Philip Ridgell of the Boone County Sheriff’s Office told FOX19 NOW on Tuesday. “He knew she was 12 years old because she had explicitly said, ‘I am 12 years old.’”

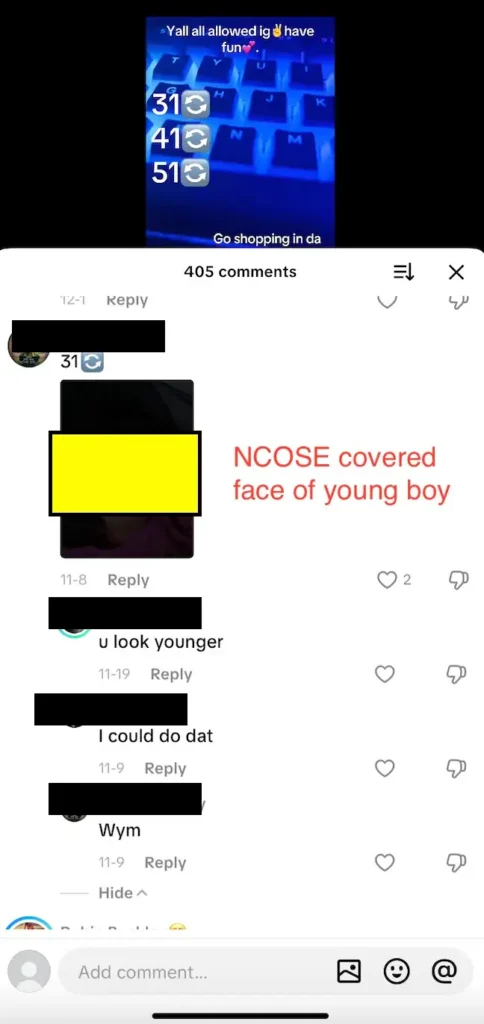

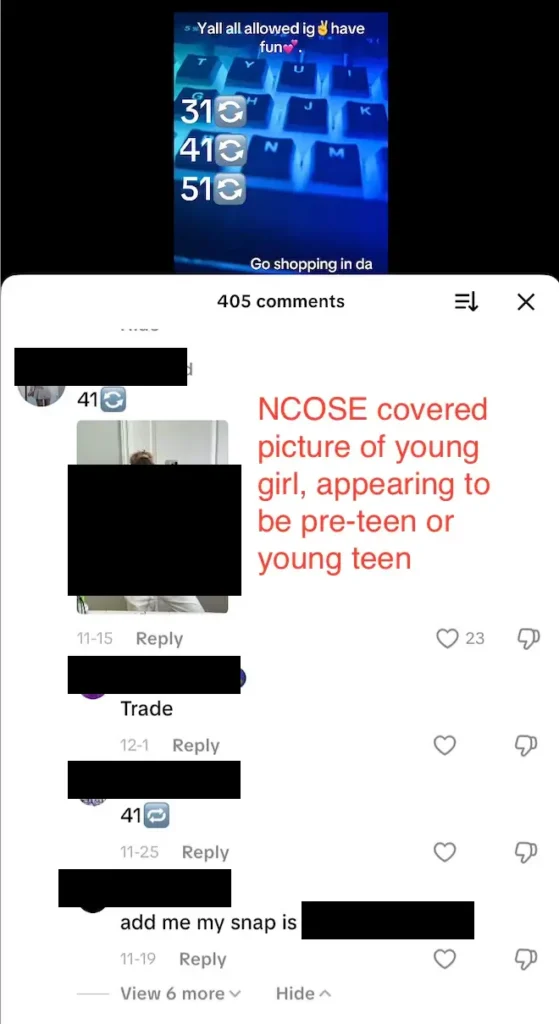

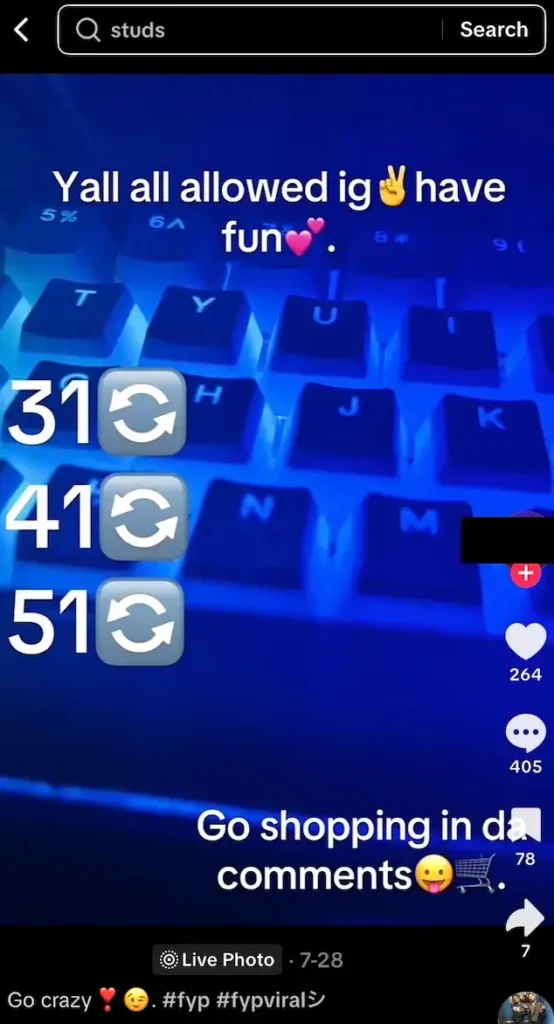

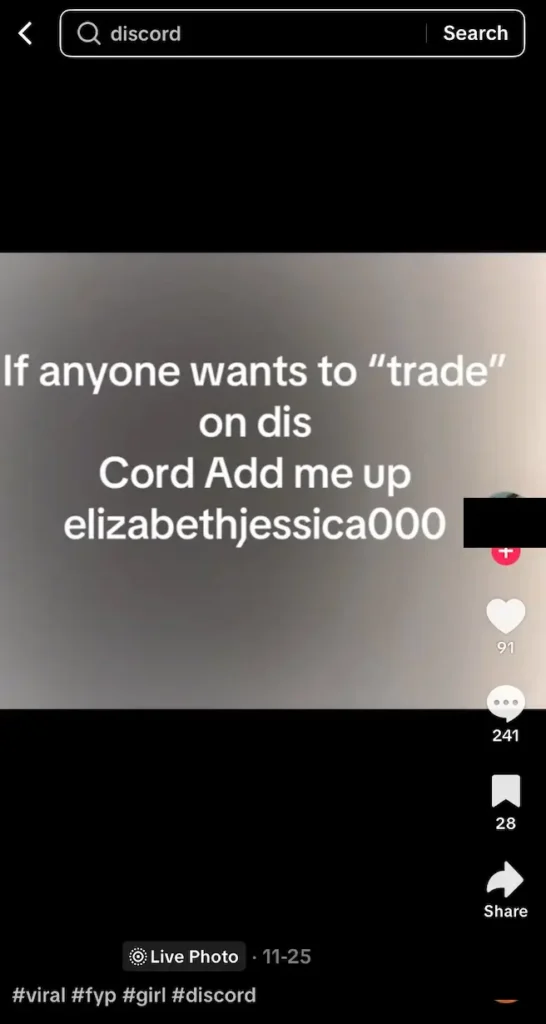

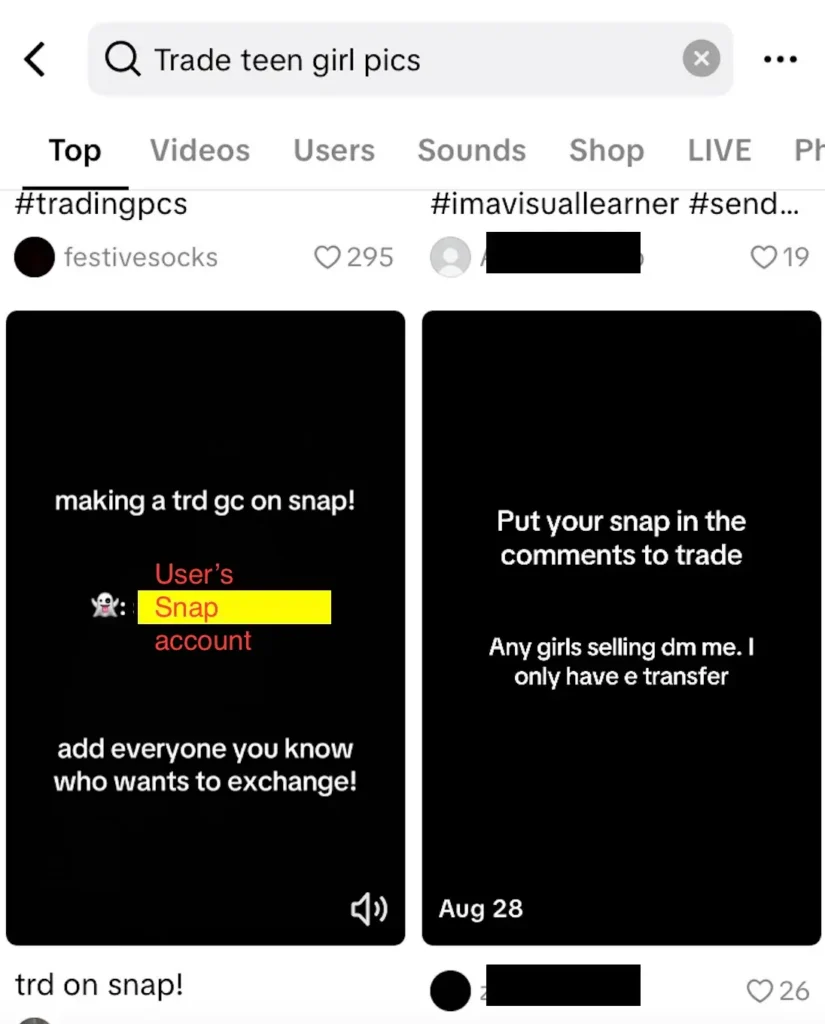

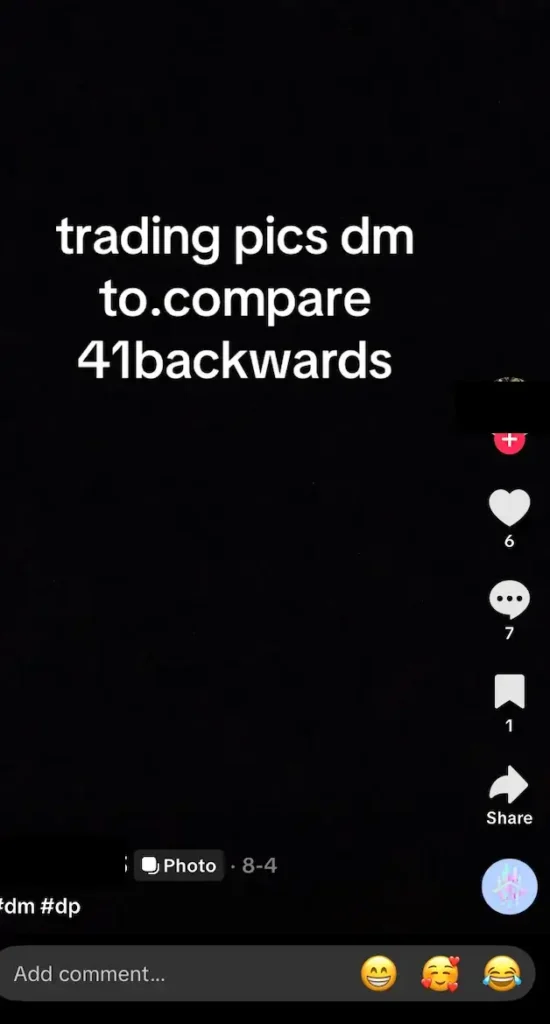

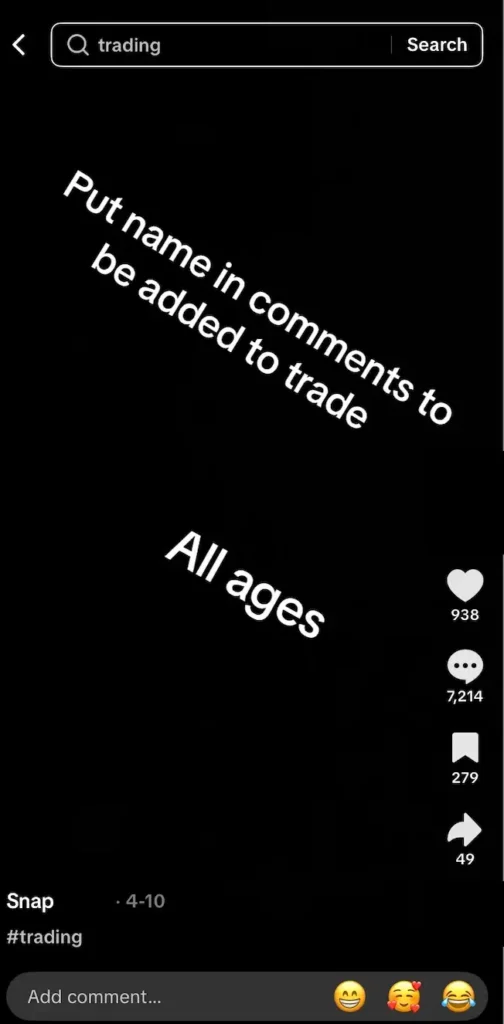

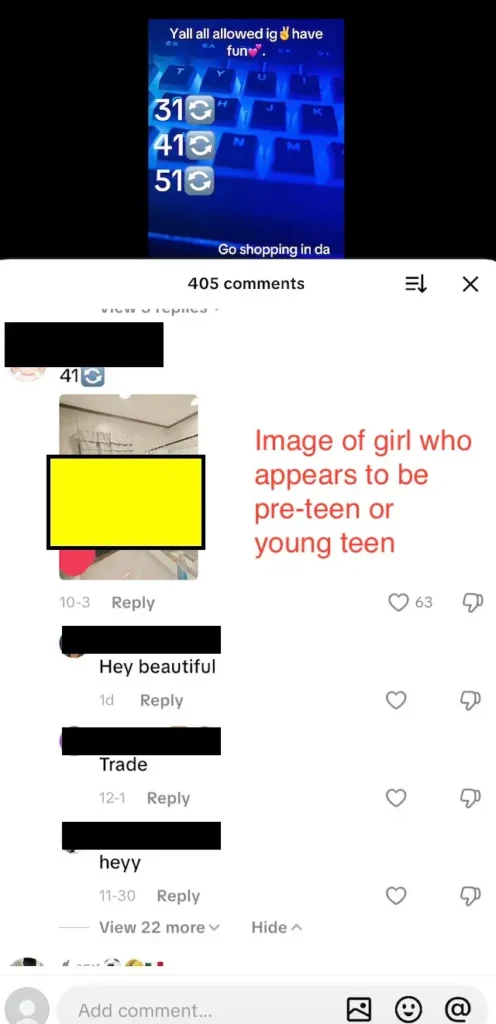

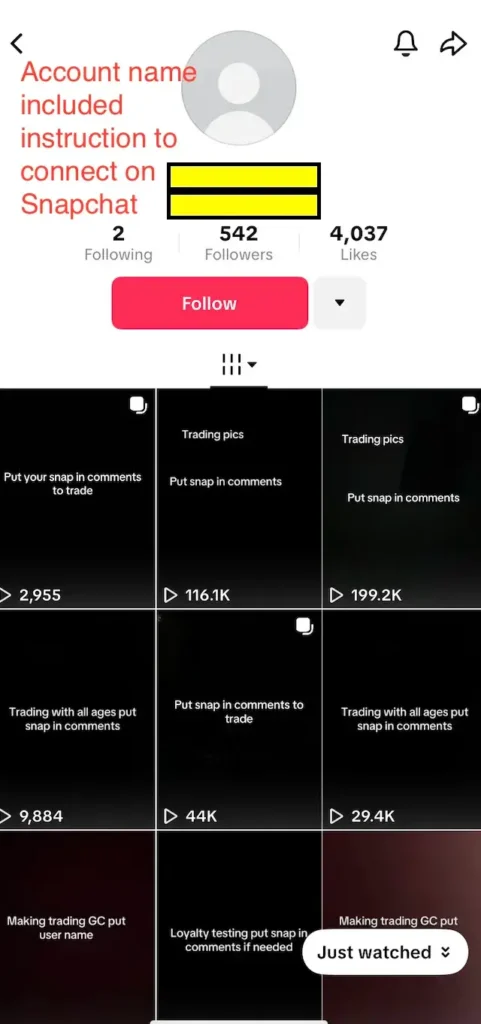

NCOSE Finds 50+ High-Risk CSAM Trading Posts in Just 15 Minutes on a 15-Year-Old Test TikTok Account

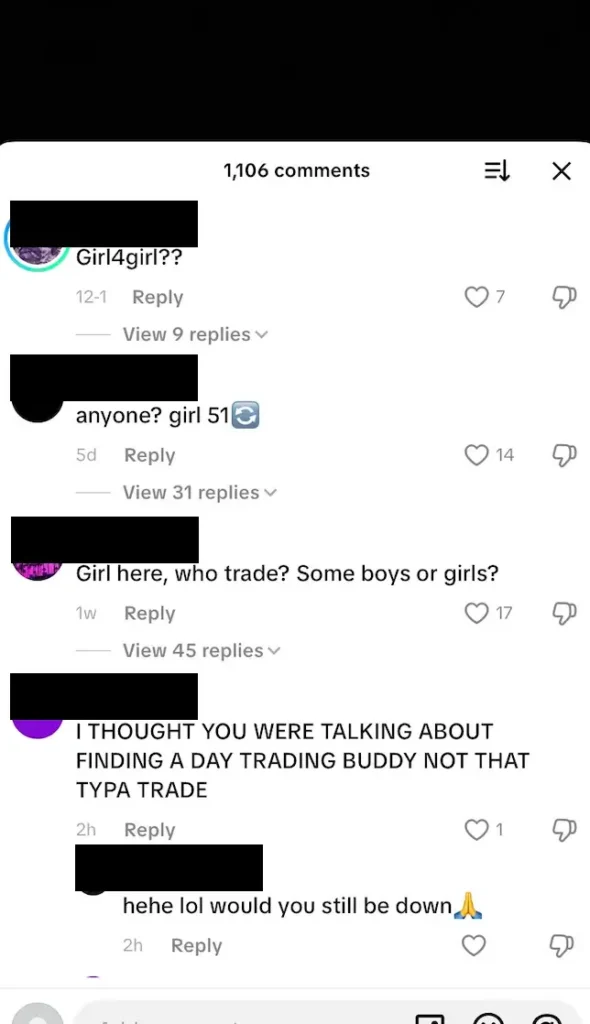

An NCOSE researcher spent 15 minutes on a test TikTok account set up as a 15-year-old user and discovered over 50 posts and comments with high-risk indicators of child sexual abuse material (CSAM) trading and networking.

High-risk flags included pictures of obviously underage boys and girls being shared in comments with requests to “trade” or connect on Snapchat for more images. Some posts encouraged users to network in the comment section. For example, one stated, “Go shopping in da comments,” after which other users shared pictures of obvious minors and still others commented beneath those pictures with requests to trade, direct message on TikTok, or move over to Snapchat.

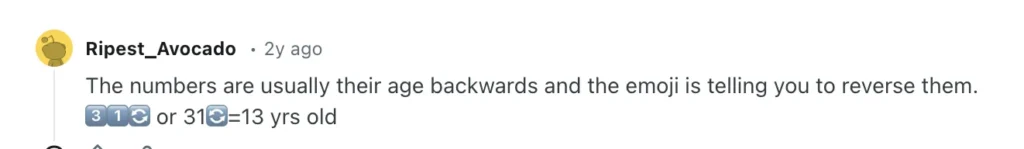

Many posts advertised trading for images with numbers like 31, 41, 51 followed by the reverse emoji 🔄, which in context indicated reversing the numbers to instead read 13, 14, 15, etc. Some accounts even explicitly noted this numeric reversal stating “41 backwards.”

This trend of the reverse age indication has been documented on other platforms like Reddit.

Below is a sample of collected screenshots.

TikTok to OnlyFans: A Recruitment Tool for Pimps

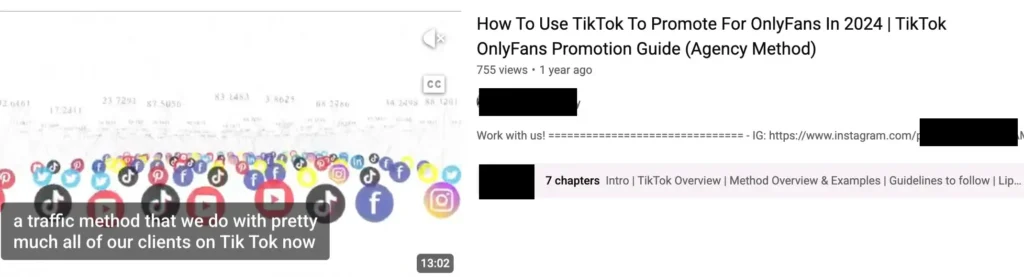

TikTok functions as a recruitment pipeline for the pornography site OnlyFans. Not only does its algorithm appear to amplify sexualized and “get rich” style content, but also “management teams” (aka online pimps) actively use TikTok (including off-platform redirects and DMs) to find and onboard new OnlyFans creators, and to market OnlyFans accounts to porn users.

First-hand accounts from former recruiters and multiple investigations show this combination produces large-scale funneling and creates serious risks for young and vulnerable people.

One of the most famous examples of this pipeline is the “Bop House.” A Miami New Times article investigates the Bop House, a Fort Lauderdale mansion where a collective of OnlyFans creators live and produce content. Created originally by two TikTok-famous creators, this “house” operates very much like a social-media “hype” house: rooms are converted into photo/video studios, and common areas like the pool serve as backdrops for shoots. The creators are living in a high-cost setup (reportedly $75,000/month rent), but they monetize heavily via OnlyFans, making the property into a content production hub more than just a shared home.

Critically, the article underscores how TikTok acts as a recruitment and marketing vehicle for this business model. Rain and Sofey first announced the house in a TikTok video, signaling its purpose to their large following. After internal tensions, Rain later said the environment “began to feel controlling,” with others “trying to dictate how I should act and post.” Meanwhile, when members left, the Bop House ran a viral online competition to replace them — drawing in some 12,000 applications. This shows how TikTok helps funnel interested creators not just into individual OnlyFans accounts, but into structured, commercialized ecosystems.

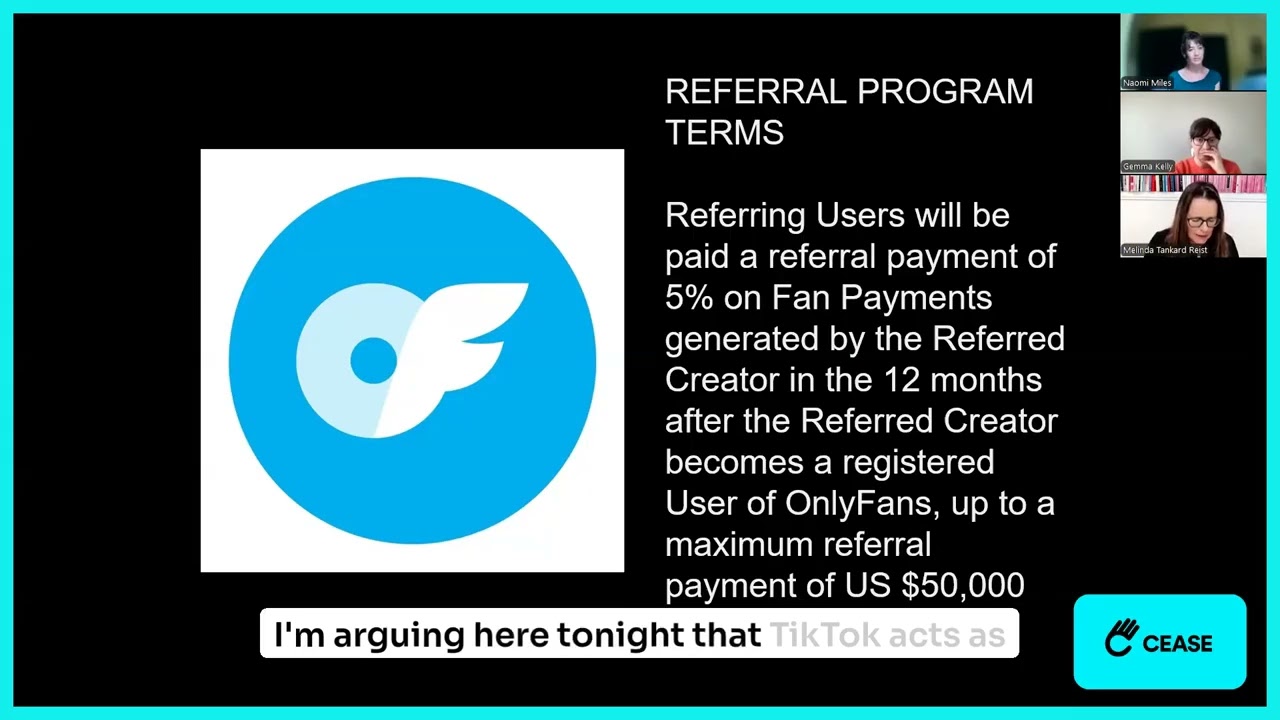

Collective Shout has done important analysis on this issue, including documenting how Victoria, a former marketer and recruiter for an OnlyFans agency, revealed how she and her colleagues used TikTok to funnel young women into pornographic content creation. She explains that part of her role was to “leverage TikTok, Instagram, Reddit … and other websites to funnel paying customers ultimately to these girls’ accounts.”

She explained that the agency pressured creators to make “suggestive TikToks,” even when they weren’t comfortable, because it was “the only way to gain more subscribers … and money.” She also describes a system of escalating content levels, from bikini photos to “everything, no rules.” Over time, the agency prioritized “young girls … who looked younger than they were” (advertised as “barely legal”), because that demographic drove more revenue.

This pipeline is particularly concerning, given that TikTok is marketed as a safe app for children 12+ years old via the Apple App Store.

NOTE: OnlyFans Has A Systemic Child Abuse, Sex Trafficking and Image-based Sexual Abuse Problem

As OnlyFans has grown in notoriety in the past few years, so has recognition that it is a platform being used for exploitation and criminal activity. OnlyFans has rightly faced increased scrutiny by police, policymakers, and the press for evidence of child sex abuse material (CSAM, the more apt term for child pornography), sex trafficking, child online exploitation, harassment, doxing, cyberstalking and image-based sexual abuse and a host of other harms and potential crimes.

Though OnlyFans claims to have instituted robust age and consent verification, evidence would suggest that these measures have been insufficient to rid the platform of crimes. In 2023, when NCOSE researchers searched public Discord servers using the keyword “onlyfans,” a third of the results tagged with OnlyFans contained high indicators of child sex abuse material.

Panel: OnlyFans- A Detective Looks at OnlyFans

OnlyFans: A Case Study of Exploitation in the Digital Age

The Lure of Lies: Grooming and Recruitment in the Sex Trade

Requests for Improvement

Enforce policies and Community Guidelines consistently and thoroughly.

In many ways, TikTok’s policies and Community Guidelines are very strong on paper, but enforcement of those policies in practice continues to be a chief concern.

Turn Restricted Mode ON by default for all minors

Turn Restricted Mode ON by default for all minors in order to reduce exposure to sexual content. As of March 2025, Restricted Mode is OFF by default and it takes 5 steps to turn it on.

Provide caretakers and minors more resources to manage their accounts and enhance safety.

Provide caretakers and minors more resources to manage their accounts and enhance safety, such as prompts upon account creation and embedded PSAs throughout the app to teach users to identify and report inappropriate or abusive behavior (sextortion, sexual harassment, grooming, etc.).

Halt LIVE video feature until the emergency of livestreamed child sexual abuse material and adult sexual content currently occurring there can be effectively prevented.

Remove DM feature for minors

Remove the direct messages feature or at minimum restrict direct messages to those who verify they are over 18 years old through robust age-verification technology at the highest sensitivity setting.

Increase the age of default safety settings to 18 years old. All minors deserve protection by default.

Improve proactive and permanent removal of accounts with posts or comments that are high-risk for promoting the sharing or selling of CSAM.

Improve proactive and permanent removal of accounts promoting or recruiting for commercial sexual content or activity, such as OnlyFans.

Adjust the Apple App Store rating from 12+ to 17+ and the Google Play rating from “Teen” to “Mature” to more accurately reflect the content on TikTok.

Fast Facts

A recent poll of 1,013 U.S. parents found that among kids who currently use TikTok, 57% were doing so before the age of 12.

In 2024, TikTok was in the top five platforms where minors reported having an online sexual experience (11%), following Facebook, Instagram, and Snapchat.

Apple App Store: 13+; Google Play: T (Teen); Bark: 15+; Common Sense Media: 15+; TikTok Policy: 13+ (but has a separate Under 13 Experience)

In 2024, TikTok (25%) was the #4 platform where CSAM offenders attempted to establish contact with a child, following Instagram (45%), Facebook (30%), and Discord (26%).

A 2025 survey in the UK found, among children exposed to pornography online, 22% saw it on TikTok. Among those who had seen AI-generated pornography, 17% reported seeing it on TikTok.

Resources

Report suspected child sexual exploitation to the National Center for Missing & Exploited Children (NCMEC) CyberTipline

NCMEC’s Take It Down service: Resource for minors to remove their sexually explicit content from online platforms

Collective Shout has ongoing grassroots campaigns regarding TikTok: check here for updates

Stop Non-Consensual Intimate Image Abuse (StopNCII) – Resource for adults to remove image-based sexual abuse from online platforms

Common Sense Media: Parents’ Guide to TikTok

Recommended Reading

CNN:

Videos of sexually suggestive, AI-generated children are racking up millions of likes on TikTok, study finds

The Information:

TikTok Working on Photo Messaging Feature Despite Employee Concerns Over Sextortion

ABC 7 Little Rock

High schooler faces over 300 felony charges in alleged sextortion, catfishing scheme

Share!

Help educate others and demand change by sharing this on social media or via email!

Spread the word to hold Big Tech accountable. Use these free resources to post on social media or share via email. Your voice can create change!