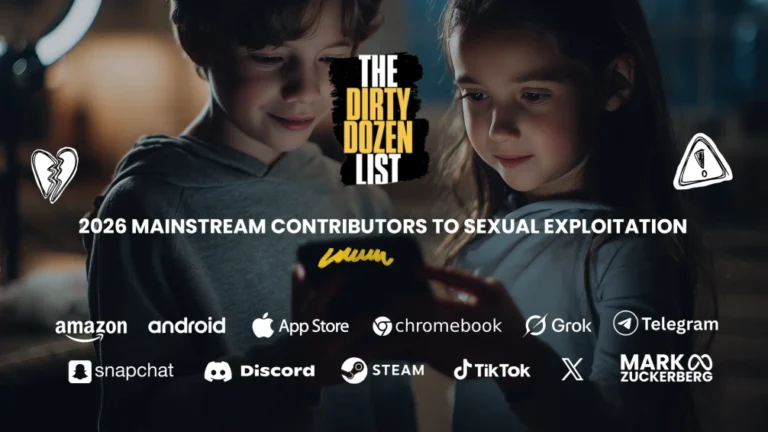

Failing The Kids It Claims To Protect

Why is the Apple App Store on the 2026 Dirty Dozen List?

The Apple App Store lulls parents with “kid-safe” labels while exposing children to hidden online dangers. Deepfake “nudify” tools, stranger connection apps for 13-year-olds, and sex games for preschoolers have all waltzed past Apple’s supposed safeguards—showing just how easily its review system can be gamed. With 87% of U.S. teens on iPhones, Apple is the de facto gatekeeper of childhood online. And until it reins in dangerous apps, fixes deceptive age ratings, and closes loopholes in its parental controls, Apple is failing the very kids it claims to protect.

"Several deepfake-porn apps were able to slip into the Apple App Store by disguising themselves as harmless photo-editing tools."

- NCOSE Researcher

The Problem

Stranger connection apps for 12-year-olds.

Sex games for 4-year-olds.

Platforms known for sextortion and abuse recommended for 13-year-olds.

These are just a few examples of how the Apple App Store has opened the virtual door for children to encounter harms online.

Imagine if toy companies were allowed to sell products labeled “Safe for Toddlers” even though they contained small pieces that could easily choke a child. Parents would trust the label, let their kids play, and be horrified and unprepared when their child began to choke. That’s exactly what happens in the Apple App Store. Apps are marketed as safe for children, labeled with age ratings, and promoted with friendly descriptions, but beneath the surface, some contain hidden risks: exposure to grooming, sextortion, explicit content, or deepfake pornography. Just like a mislabeled toy, the App Store gives parents a false sense of security while kids are left vulnerable to harm.

Apple is a cultural powerhouse, a global icon, and the ultimate gatekeeper to the digital lives of 87% of U.S. teens. With its unmatched resources, cutting-edge products, and world-class engineers, Apple has the power to set the gold standard for safety and privacy. Yet, instead of leading the charge to protect its youngest users, Apple has fallen alarmingly short, allowing harmful apps, deceptive age ratings, and inadequate parental controls to put millions of children at risk—all while raking in billions from in-app purchases.

The Apple App Store not only markets harmful apps to children; it also offers burdensome parental controls. The controls are so complex and take so long to set up that an IT expert sells a $40 guide, with 82 pages and 330 screenshots, that explains how to set up Apple parental controls in 2 hours. Even then, loopholes can persist.

Further, there are serious concerns that Apple is violating children’s online privacy laws. And while Apple has made important improvements to its devices, like turning on default web filters and blurring explicit content for children 17 years old and younger, it abandoned plans to detect child sexual abuse material (CSAM) on iCloud, even though 90% of Americans believe such detection is a critical responsibility.

At various times, the App Store has even been caught hosting deepfake “nudify” apps that create sexually explicit images of non-consenting people (i.e. image-based sexual abuse). Investigators found that several deepfake-porn apps—including PicX, Tapart, MatureAI, and Artifusion—were able to slip into the Apple App Store by disguising themselves as harmless photo-editing tools. Although Apple’s guidelines explicitly ban offensive or sexually exploitative content, the developers avoided detection by using innocuous descriptions in the App Store while advertising the apps’ real “remove clothes” features on social media. Apple removed the apps after journalists exposed them, showing just how easily Apple’s review system can miss dangerous apps and how simple it is for harmful AI tools to slip through the cracks.

Since being named to the 2023 Dirty Dozen List, the App Store has continued to market harmful apps like Snapchat and Roblox to minors, exposing them to grooming, sextortion, and explicit content, while its parental controls remain riddled with flaws and loopholes. As the architect of the digital ecosystem for millions of children, Apple has the tools and influence to lead the fight against exploitation. Instead, it has chosen the easy way out, leaving countless young people vulnerable to harm.

Examples of Harmful Apple App Store Ratings

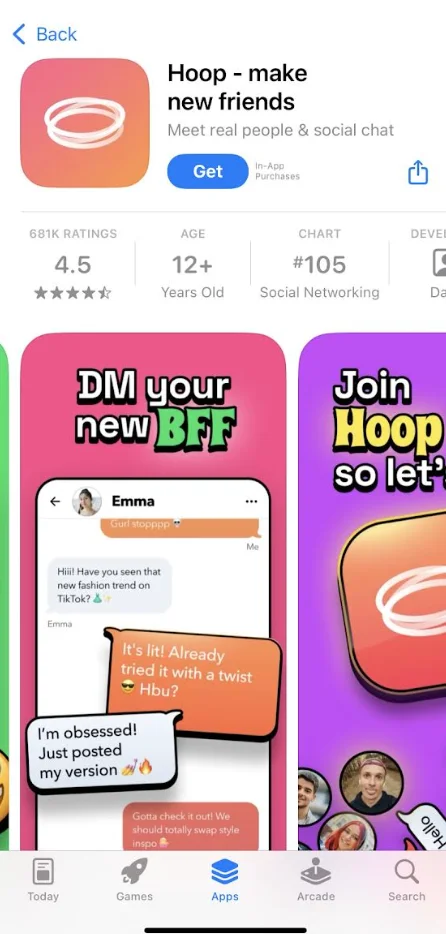

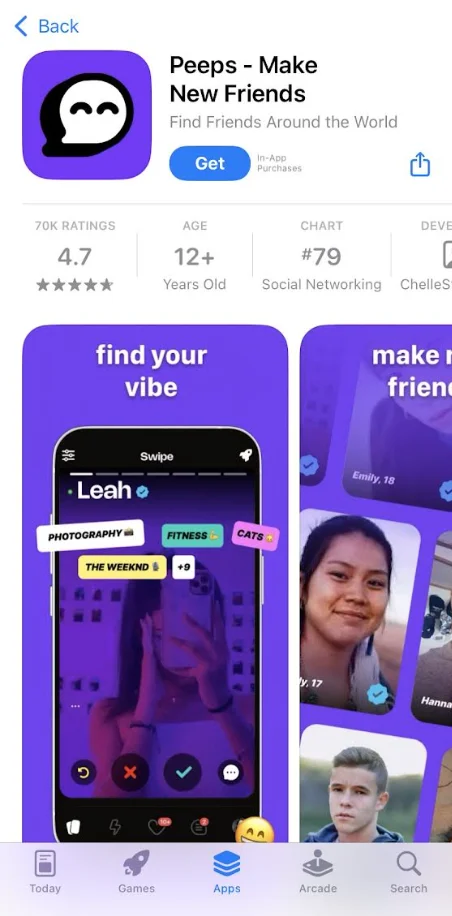

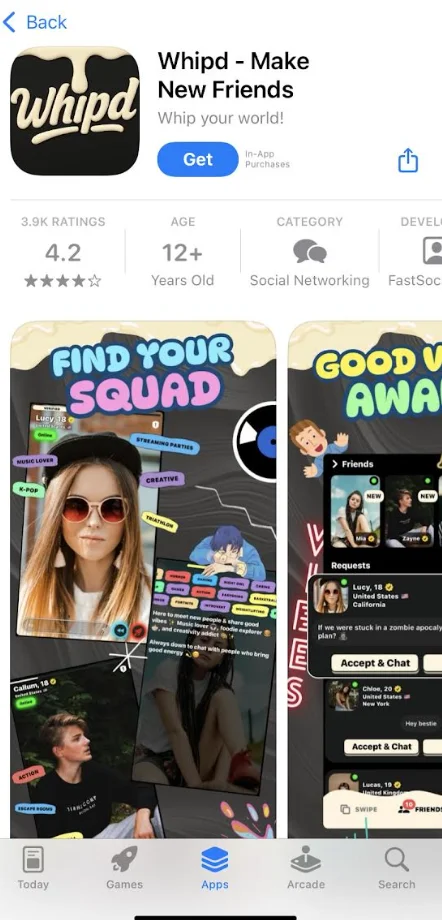

Apps designed to connect minors with strangers create direct pathways for grooming and abuse. Apps like Peep, Hoop, and Whipd are marketed to and accessible to children ages 12 and up on the Apple App Store.

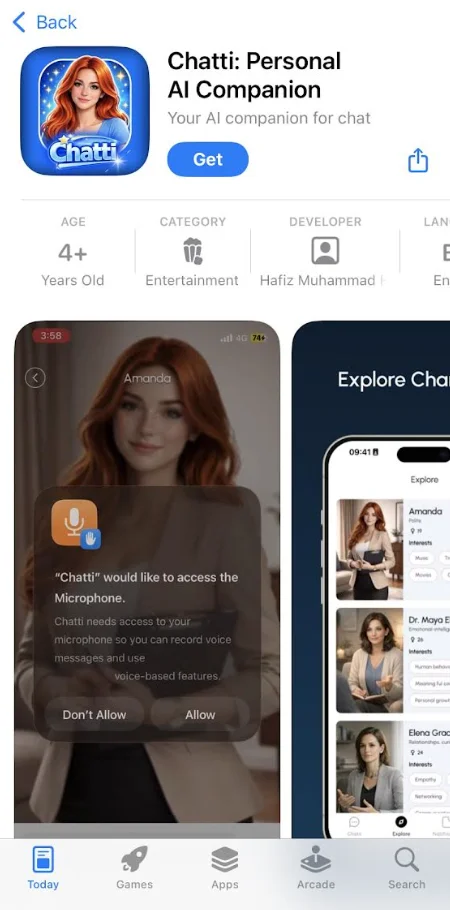

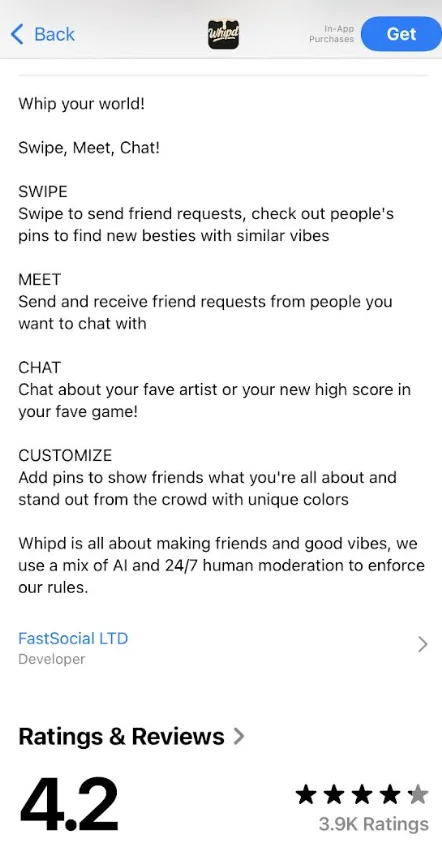

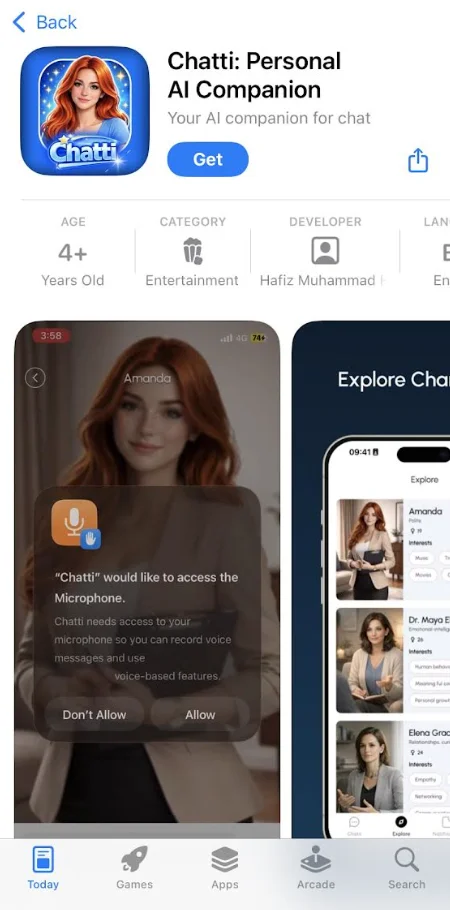

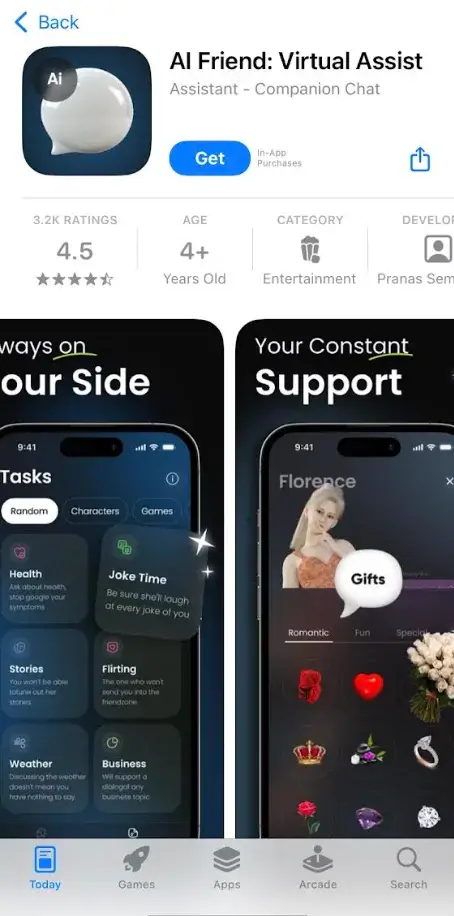

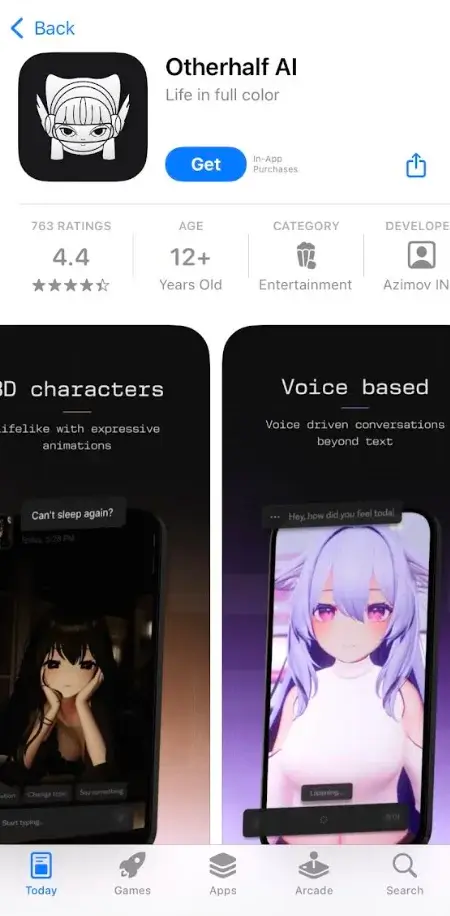

AI chat companions can harm children by fostering intense emotional dependence, with real-world lawsuits alleging that bots sexually abused or harassed minors, encouraged self-harm or suicide, and contributed to teen deaths. Apps like AI Friend (4+), Chatti (4+), and Otherhalf AI (12+) are marketed to and accessible to children on the Apple App Store.

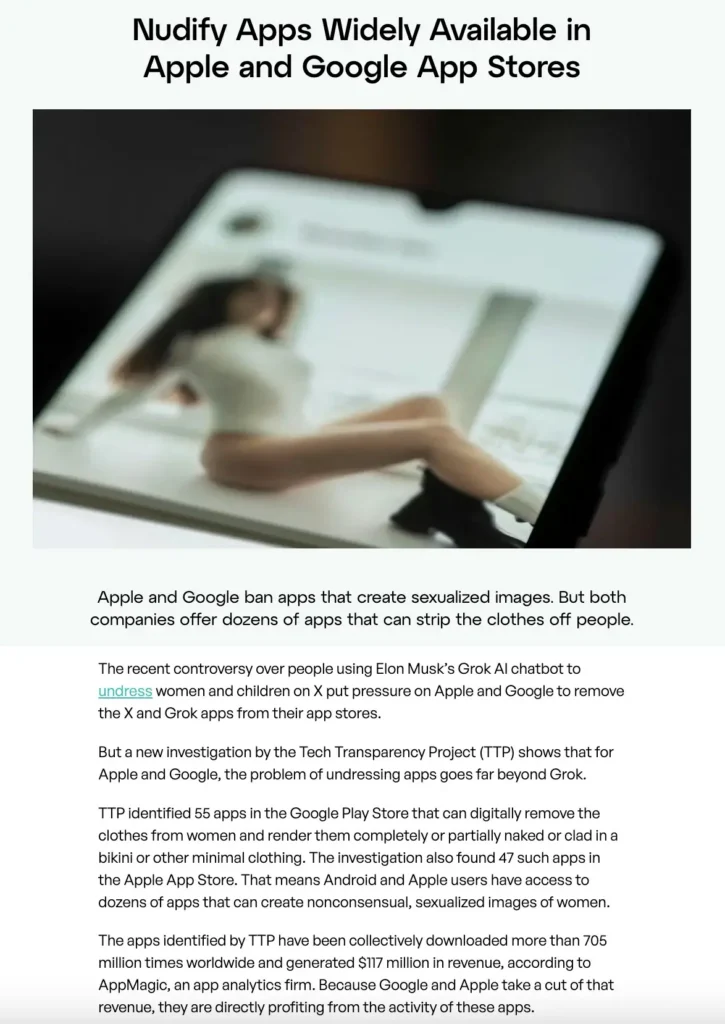

In January 2026, the Tech Transparency Project identified 47 apps on the Apple App Store that could “digitally remove the clothes from women and render them completely or partially naked or clad in a bikini or other minimal clothing.” Some of these apps were even rated for ages 9+ in the Apple App Store.

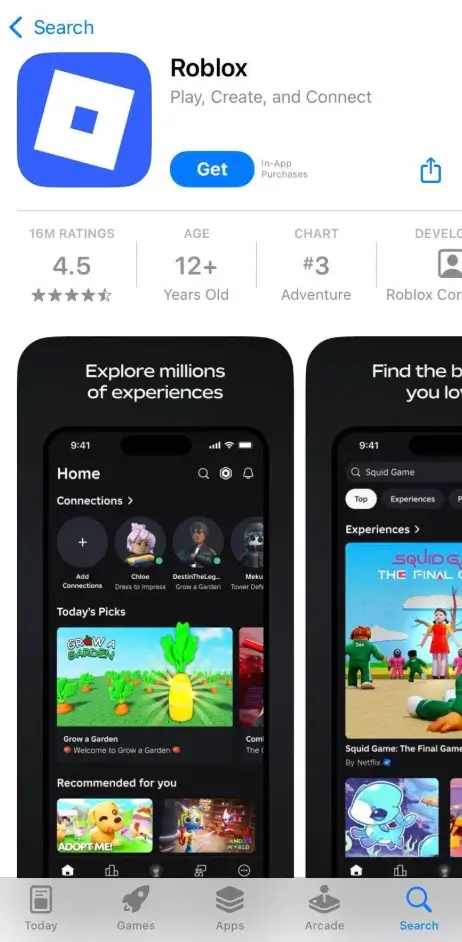

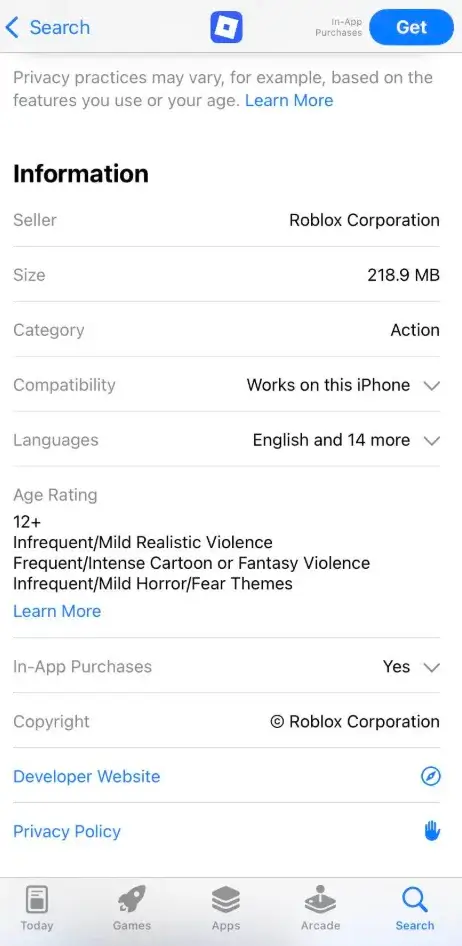

Roblox: Rated 12+ despite predator grooming via chats, exposure to explicit content, sextortion, and real-world abductions or assaults.

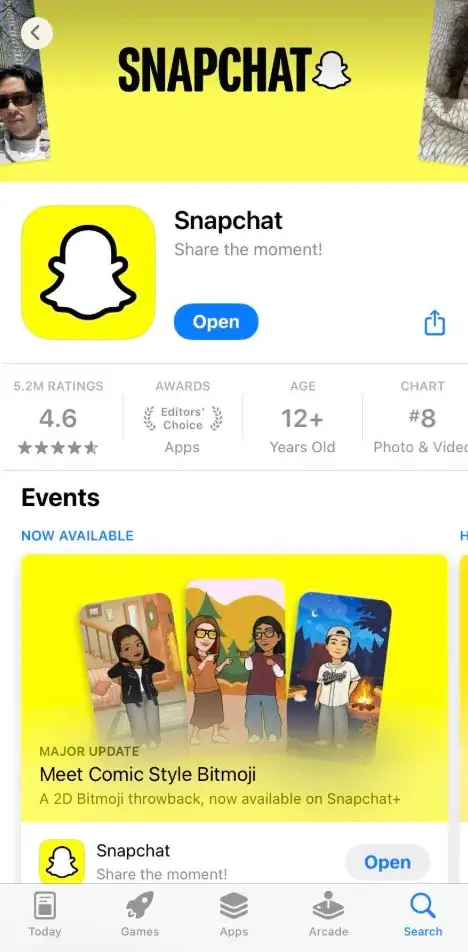

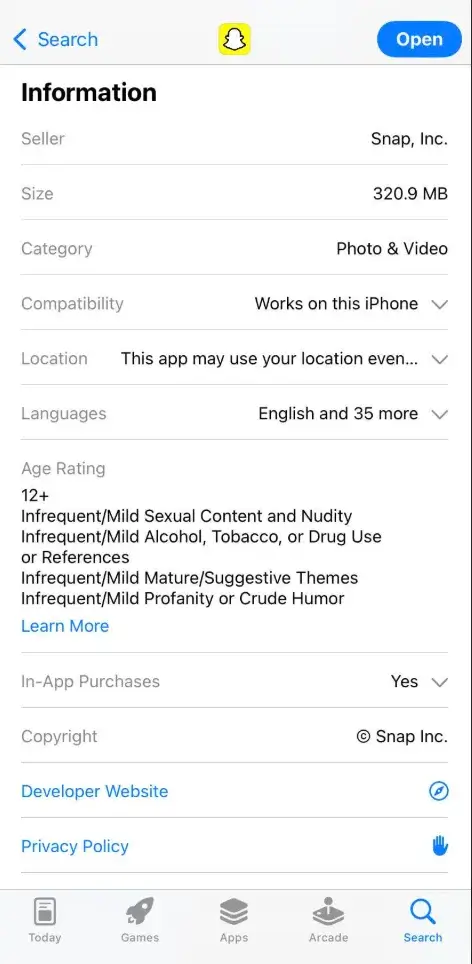

Snapchat: Rated 12+ and awarded an “Editor’s Choice Award” despite widespread grooming, sextortion, sex trafficking, and CSAM sharing.

Instagram: Rated 12+ despite consistently being ranked a leading platform for sextortion, grooming, and severe sexual content.

Proof: Evidence of Exploitation

WARNING: Any pornographic images have been blurred, but are still suggestive. There may also be graphic text descriptions shown in these sections. POSSIBLE TRIGGER.

Marketing harmful apps to children, from sex games to dangerous social platforms

Apple has been accused of marketing apps with harmful content to children by assigning misleading age ratings.

For example, the Canadian Centre for Child Protection found that Apple had rated kink and hookup apps as appropriate for 11-year-olds, even though the developers of these apps state in their terms of service that users under 17 are not permitted. Within 24 hours, Heat Initiative and ParentsTogether Action found more than 24 sexual games rated as appropriate for kids as young as 4 years old. Many of these apps openly advertised their sexual nature in the title or descriptions.

Further, Apple fails to accurately portray the risk that more mainstream social media apps pose to children. Instagram and Snapchat, both rated 13+ in the App Store, expose minors to sexual predators, explicit content, harmful social media challenges, and myriad other dangers. Snapchat, for instance, has been linked to sextortion cases and drug trafficking, yet Apple’s age rating fails to reflect these dangers. The risk disclosures that accompany the age ratings say nothing about sextortion or sexual exploitation, even though Snapchat reportedly receives 10,000 reports of sextortion every month.

Screenshots below collected December 2025

Similarly, Roblox, a game rated 13+ that investigators have described as an “X-rated pedophile hellscape,” includes user-generated pornography, anonymous chat features, and violent roleplay. Cases of predators grooming and abusing children on Roblox regularly make headline news, yet Apple’s risk disclosure only mentions “infrequent/mild cartoon or fantasy violence” and “infrequent/mild realistic violence.”

Screenshots below collected December 2025

Apple’s age ratings are based simply on the information app developers voluntarily disclose. This stands in contrast to other industries, such as video games, television, and movies, which rely on independent review boards to assign age ratings, thereby ensuring an objective and uniform standard. Apple’s system, on the other hand, results in highly subjective, inconsistent, and often dishonest ratings, because app developers are incentivized to minimize the risks of their products in order to make more money from a broader user base.

Apple Parental Control Failures

Apple’s parental controls, marketed as tools to help parents safeguard their children’s online experiences, have been widely criticized for being ineffective, overly complex, and riddled with loopholes. For instance, the “Ask to Buy” feature, which is supposed to notify parents when their child requests to download an app or make a purchase, frequently fails to send notifications, leaving children unsupervised in their app usage. On one community thread that raised this problem, 30,000 parents indicated that they were experiencing the same issue. Many parents have reported that this feature is so unreliable that they’ve had to disable it entirely. Similarly, Apple’s Screen Time controls, which are designed to limit app usage and restrict access to adult content, require over 20 steps to configure properly. Even when set up, these controls are easily bypassed by children, who can exploit backdoors in apps like the Bible app, which provides unfiltered web access. These flaws not only undermine the trust parents place in Apple’s tools but also leave children exposed to harmful content and risks.

Adding to the problem, Apple’s parental controls rely heavily on the App Store’s age ratings, which are often misleading and inaccurate. In other words, if an app is incorrectly rated as appropriate for children, it may not be blocked by parental controls. For example, apps like Roblox, rated 13+, include anonymous chat features, user-generated pornography, and violent roleplay, yet these risks are not disclosed in the app’s rating. Similarly, Snapchat, also rated 13+, has been linked to sextortion cases, drug trafficking, and sexually explicit content, but Apple’s parental controls fail to block these apps effectively. Even when parents attempt to use Apple’s tools to restrict access, the system’s glitches and poor design make it difficult to enforce meaningful protections. In one case, a mother discovered that her 11-year-old son was exposed to a sexually explicit in-game ad despite her having paid to remove ads from the app. These persistent failures highlight Apple’s inability—or unwillingness—to provide parents with reliable tools to protect their children in an increasingly dangerous digital landscape.

To add insult to injury, Apple appears to have actively sought to suppress more effective third-party parental controls. For example, a 2019 New York Times article reported that Apple had removed or restricted 11 of the 17 most downloaded third-party parental control or screen time control apps.

Apple Still Refuses to Scan for CSAM

In December 2022, Apple abandoned its plans to proactively scan for CSAM. [While this matter goes beyond the App Store itself, it is important to note.]

Apple effectively turned its back on survivors of child sexual abuse, leaving them to endure not only the initial, unimaginable trauma of the abuse but also the ongoing torment of knowing that the crime scene footage—i.e. recordings of their abuse—continues to circulate on Apple’s digital storage platforms. Each time these images or videos are viewed, survivors are re-victimized, forced to relive their abuse.

West Virginia’s Attorney General, John B. McCuskey, filed a consumer protection lawsuit against Apple in state court on February 19, 2026. It has been described as the first lawsuit of its kind by a state or federal government agency against Apple specifically over its handling of child sexual abuse material (CSAM) on iCloud.

Key Allegations in the Lawsuit

- Apple knowingly allows iCloud to serve as a platform for storing and sharing CSAM by refusing to deploy effective detection tools (e.g., scanning photos/videos for known abusive content).

- The company prioritizes its privacy branding and business interests over child safety, using privacy as a “cloak” to avoid responsibilities to children and families.

- Apple’s iCloud “reduces friction” for abusers by enabling easy access, search, and cross-device sharing of illegal material.

- Apple reports far fewer suspected CSAM instances to the National Center for Missing & Exploited Children (NCMEC) compared to peers (e.g., only 267 reports in 2023 vs. millions from Google/Meta).

- Internal evidence cited includes a 2020 executive text suggesting Apple’s privacy focus made it the “greatest platform for distributing child porn.”

- Apple abandoned its 2021 plan for NeuralHash (on-device CSAM scanning) due to privacy backlash, opting for less robust alternatives like Communication Safety (nudity warnings/blurring in Messages, etc.), which the suit calls inadequate compared to tools like Microsoft’s PhotoDNA used by other companies.

FTC Complaint Against Apple for Violating the Children’s Online Privacy Protection Act (COPPA)

The Digital Childhood Institute submitted a complaint to the Federal Trade Commission in August 2025, asserting that Apple’s parental control system constitutes unfair and deceptive practices. This filing aligned with the FTC’s recent focus on youth protection, following its summer event, “The Attention Economy: How Big Tech Firms Exploit Children and Hurt Families.”

In its complaint, the Institute—an organization made up of child-safety advocates—alleges five violations spanning Section 5 of the FTC Act, the Children’s Online Privacy Protection Act (COPPA), and Apple’s 2014 FTC consent order related to in-app purchases, including:

- “Knowingly Marketing Harmful or Age-Restricted Apps as Safe for Kids: Apple falsely advertises and distributes apps with adult, violent, and sexually explicit content as safe for minors. It also approves lower age ratings than those required by the apps’ own terms of service or privacy policies. It does so despite knowing that the age ratings are inaccurate, misleading, and directly expose children to serious harm. Apple controls and approves the app age ratings, amplifies and monetizes apps with known false ratings, and profits from every in-app purchase. This conduct violates Section 5 of the FTC Act.”

- “Other Deceptive Safety Claims and the Failure of Apple’s Parental Controls: Apple markets its App Store as a safe environment for children, with curated content, reliable age ratings, and effective parental controls. These claims are misleading. Apple’s rating system produces deceptive results; the parental controls often have bugs or are easily bypassed; and Apple has a history of blocking more effective third-party safety tools while promoting its own flawed system. Together, these practices give families a false sense of security and constitute deceptive conduct under Section 5 of the FTC Act.”

- “Unfair Trade Practices Involving Exploitative Contracting with Minors: Apple knowingly facilitates unfair digital contracts between vulnerable children and app developers. These clickwrap agreements (or contractual terms of service) that a minor is obligated to agree to as part of the download contain arbitration clauses and exploitive data licenses that allow the developer access to highly sensitive information such as the minor’s location data, contact lists, photos, camera, and microphone. Apple facilitates a user’s entry into such contracts even when it knows the user is a minor and not legally permitted to enter into such complex, binding contracts. Further, Apple often excludes parents from this contracting process, giving parents no reasonable opportunity to protect their vulnerable children from such one-sided contracts with powerful tech companies. Apple’s conduct is unfair under Section 5 of the FTC Act.”

- “Widespread Violations of the Children’s Online Privacy Protections Act (COPPA): Apple knowingly enables app developers to collect personal data from children under 13 without parental consent. Apple has actual knowledge of the user’s age, yet withholds this information from developers, granting them plausible deniability while facilitating unlawful data extraction. Apple also conditions a child’s participation in its data collection regime by offering so-called “freemium” gaming apps. This conduct violates COPPA and constitutes both unfair and deceptive practices in violation of 16 CFR §312.9 and Section 5 of the FTC Act.”

- “Violation of the 2014 FTC Consent Decree on In-App Purchases: Apple continues to bill accounts for in-app purchases made by minors without obtaining express, informed parental consent, as required by the 2014 FTC consent decree. This is a direct violation of a federal order. Apple allows parents to disable consent tools like “Ask to Buy,” even for very young children, including preschool-aged children, and does not require users over the age of 13 to be linked to a parent account to allow for parental consent.”

Requests for Improvement

Employ independent, third-party review to assign age ratings and craft risk disclosures to accurately and directly state features that put minors and/or adults at risk for any harms.

All parental consent request messages should clearly disclose these risks prior to approval for downloading/purchasing within the app store and allow parents to report incorrectly rated apps.

Prioritize scanning for discrepancies between stated age rating and user experience.

Implement 18+ age verification to restrict children from accessing apps with features that put them at risk for sexual abuse or exploitation, including dating, hookup, and stranger connection apps, AI companion chatbots, and apps that host obscene content like Bluesky, Reddit, and X.

Ensure parental controls are effective and intuitive; fix known bugs such as the unreliability of the “Ask to Buy” feature. Ask to Buy should be permanently enabled for all users under 18 because each app download involves agreeing to a new and complex terms-of-service contract, and FTC consent orders beginning in 2014 require explicit, informed parental consent for minors’ in-app purchases.

Fast Facts

87% of U.S. teens own an iPhone

74% of parents support requiring the age ratings on apps to be determined by an independent expert review process rather than by device and app makers.

In a sample of young people who had created AI-generated pornography or AIG-CSAM of another person, 70% said they downloaded the app they used to make deepfakes from their device’s app store (e.g., Apple’s App Store or Google’s Play Store).

Apple’s annual revenue as of September 2025 was $416 billion, with a gross profit of $195 billion.

Within 24 hours, Heat Initiative and ParentsTogether Action found more than 24 sexual games rated as appropriate for kids as young as 4.

Resources

Learn about the App Store Accountability Act

The App Danger Project: Check user reviews from app stores that raise concerns about dangerous experiences for children

Apple’s Age Ratings Definitions

Recommended Reading

Orrick:

Texas and Louisiana Join Growing Trend of State Age Verification Laws for App Stores

Wall Street Journal:

Tim Cook Called Texas Governor to Stop Online Child-Safety Legislation

Heat Initiative & Parents Together:

REPORT: Rotten Ratings - 24 Hours in Apple's App Store
