From Gaming Chat to Grooming Pipeline

Why is Discord on the 2026 Dirty Dozen List?

Discord is an online destination for exploitation, and even organized criminal sextortion networks have noticed. Discord’s design choices—private channels, limited default safeguards, and reactive enforcement—undermine its own “safety rules,” allowing serious harms like sextortion, grooming, CSAM distribution, and image-based sexual abuse to flourish. It’s time for them to take prevention seriously.

"By its very design Discord allows high-risk on-ramps for exploitation and policy enforcement is often reactive and lax."

- NCOSE Researcher

The Problem

Discord is a pipeline for grooming → coercion → sextortion

What started as a communication platform for gamers has become a vastly popular app for direct messaging, small group chats, and larger server communities, complete with text, voice, video, and screen sharing.

Discord is no stranger to the Dirty Dozen List; in fact, this year marks its fifth time being named a mainstream contributor to sexual exploitation.

Sexual abusers return to Discord again and again, thanks to this company’s reputation for lax rule enforcement and dangerous design. Even registered sex offenders have been charged with targeting kids on Discord. They use its DMs, video calls, or small servers to gradually escalate conversations, often requesting sexual content, which can then be used to coerce or blackmail (aka sextortion) children into further sexual abuse.

Often abusers initially meet minors on other social media or videogame sites (like Roblox or Snapchat) and then direct them to connect on Discord where further grooming and escalation take place.

Why is Discord an online destination for exploitation? Because by its very design Discord allows high-risk on-ramps for exploitation and policy enforcement is often reactive and lax. Several Discord users have reported to NCOSE that the platform places too much responsibility on its users to moderate and report harmful activity. This approach can create an environment where exploiters easily connect and share abusive material, knowing they are unlikely to be reported by others with similar intentions.

In fact, Discord is SUCH a reliable platform for abusers that organized criminal sextortion networks like 764 have been documented using Discord to share tactics, recruit victims, and coordinate sextortion at a larger scale.

Predators use Discord not only to obtain CSAM from children themselves, but also to share and trade CSAM with each other, whether directly, via external links, or through invite-only community groups on Discord. Discord has also become a popular platform for posting deepfakes, AI-generated images, and other forms of image-based sexual abuse.

The disturbing truth is none of this is news to Discord.

Despite constant reports and lawsuits about Discord being used for sexual exploitation, and its CEO even being called before lawmakers to testify about this problem, the problems persist.

Discord still does not default safety features to the highest possible setting for teens. In fact, despite promising a global rollout of “teen‑by‑default” safety settings that would give all users age‑appropriate protections and limit access to risky spaces, Discord postponed this launch. Discord has claimed that a global rollout is coming in late 2026 and that it will provide teen safeguards and ensure age verification is conducted with high privacy standards.

But will Discord actually ensure these protections are effective? Or will they water down the protections and settle for a PR stunt?

We are calling on Discord to make good on its promise, and to launch teen-by-default safety settings at best-in-class standards.

Proof: Evidence of Exploitation

WARNING: Any pornographic images have been blurred, but are still suggestive. There may also be graphic text descriptions shown in these sections. POSSIBLE TRIGGER.

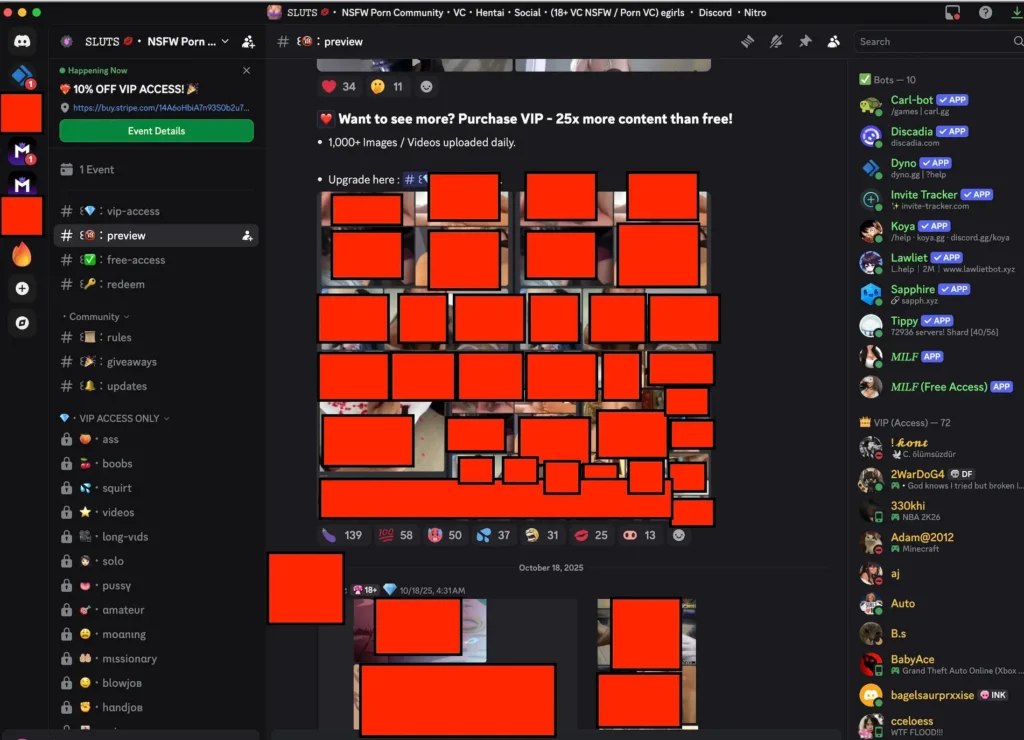

NCOSE Investigation Finds 10-year-old Account Still Exposed to Porn on Discord

An NCOSE investigator set up an account as a 10-year-old child in December 2025. See the report below:

Discord does a very poor job of verifying age and obtaining parental consent for new accounts that children will be using on the platform. This is a significant safety concern given that many children use Discord to communicate with one another while playing video games or for other social interactions.

Upon attempting to create a new account on Discord, I discovered that there was no parental or age verification check for a 10-year-old child.

After account creation, the child is unable to discover NSFW accounts located within the native Discord Discover page. However, if a child simply locates an NSFW Discord server through Google, they will be able to accept a public invitation to join the server in real time.

Discord’s own policies acknowledge that adult content is supposed to be restricted to users 18+, but in practice, [as of March 2026] access has long relied on self-attested age and simple click-through warnings. Users are merely asked to confirm they are over 18 before entering explicit channels.

Below is a censored screenshot of pornographic imagery on Discord available to a 10-year-old. More evidence is available for press or policymakers.

Grooming and CSAM Sharing

Discord’s Community Guidelines explicitly prohibit sexual interactions involving minors and grooming behavior. Yet the platform’s structure (public servers that funnel into private messages and smaller invite-only spaces) and its lack of sufficient prevention and intervention measures create an environment where grooming can predictably unfold out of sight.

Below is a mere sample of grooming, child sexual abuse, and CSAM sharing cases involving Discord.

- March 13, 2026 — Former Boston Teacher Sentenced to 10 Years in Prison for Child Exploitation

- A former science teacher was sentenced in federal court “for coercing and enticing at least one underage female to engage in sexual conversations online and requesting that she produce and send child sexual abuse material (CSAM) of herself… [and he] possessed CSAM depicting rape of both female and male minors, ranging in age from approximately five to 17 years old… [on Discord he] messaged at least 20 underage females between the ages of 12 and 17 years old located throughout the country, including Georgia, Texas, Tennessee, West Virginia, North Carolina and Florida, as well as the United Kingdom and Canada. In these chats, Gavin disclosed that he was a teacher, engaged in sexual conversations and often asked the minors to send him pictures of themselves engaged in sexually explicit conduct – knowing that the children were underaged.”

- March 10, 2026 — FBI: Raleigh man sexually exploited teen, abused baby on video after meeting online

- Federal authorities charged a Raleigh, North Carolina man who allegedly met an underage girl on Discord and other platforms, using those communications to coerce explicit images and make plans for abuse; court filings also allege he recorded the sexual abuse of an infant. It was reported this man: “made plans to drive to a 13-year-old girl’s home in Nebraska, pick her up, and bring her to North Carolina to sexually abuse her. Swenson, 42, met the girl on Zangi and Discord and convinced her to send images of herself.”

- February 20, 2026 — Monroe County man learns sentence after sexually exploiting children using Roblox, Discord, Snapchat

- A Michigan man was sentenced to at least 16 years in federal prison after pleading guilty to sexual exploitation of children and related charges; prosecutors said he used Discord (along with Roblox and Snapchat) to communicate with and sexually exploit minors by coercing them into producing explicit content and distributing their images.

- January 31, 2025 — Man accused of internet child sex crimes allegedly met victims on Roblox, Discord

- A 20‑year‑old Baton Rouge man was arrested on multiple child sex crime charges after investigators determined he coerced a 14‑year‑old girl to send “inappropriate photos” via Discord after initially contacting her on Roblox. Deputies also linked him to another extortion case involving a 15‑year‑old, and law enforcement found child sexual abuse material on his Discord account during the investigation.

- September 15, 2025 — California man sexually exploited a dozen girls on Discord and sentenced

- A 20‑year‑old man from California was sentenced to 14 years in federal prison after pleading guilty to coercion and enticement of minors and CSAM-related charges. Prosecutors said the man used Discord to engage with at least a dozen victims aged 12 to 17, coercing them into sending explicit images and distributing child sexual abuse material, and in some cases maintaining in‑person relationships.

- September 22, 2025 — Marion County deputies arrest man for sexually exploiting children on Discord and X

- In Florida, deputies arrested a 20‑year‑old man accused of possessing and sending child pornography and messaging minors online, with investigators finding hundreds of explicit images involving underage victims on his Discord account. One victim reportedly began receiving messages at age 11.

- October 3, 2025 — Cyber tip leads to Tennessee man’s arrest for sharing child sex abuse material on Discord

- A 41‑year‑old Davidson County man was arrested after the National Center for Missing and Exploited Children issued a cybertip report about an account on Discord that had uploaded more than 30 CSAM files. Law enforcement identified the account as this man’s, and he was charged with aggravated sexual exploitation of a minor and failing to register as a sex offender following the search and seizure of bond‑related evidence.

New Jersey and Florida Attorneys General Take Action Against Discord

March 2026: Florida Launches Civil Investigation into Discord over Child Safety Concerns

In March 2026, Florida Attorney General James Uthmeier announced that his office had launched a civil investigation into Discord, alleging that child predators increasingly use the platform to contact and exploit minors.

“We’ve brought investigations into Snapchat, into Roblox, and others,” AG Uthmeier said. “What we’ve learned today is all roads lead to Discord.”

The investigation includes subpoenas seeking internal records about Discord’s marketing to children, age‑verification practices, content moderation, and how the company responds to complaints and exploitation reports, reflecting claims that current safety measures are inadequate. Florida’s AG described Discord as potentially acting as a “safe haven” for predators.

"Florida Attorney General James Uthmeier announced Thursday that his office has launched a civil investigation into Discord." https://t.co/OlU1wYjErs

- ABC7 Sarasota (@mysuncoast), March 18, 2026

April 2025: New Jersey Sues Discord for Allegedly Failing to Protect Children and Misleading Parents

In April 2025, New Jersey Attorney General Matthew J. Platkin filed a lawsuit against Discord, Inc., in the Superior Court of New Jersey, alleging that it engaged in deceptive, unconscionable, and unlawful business practices that endangered children. The complaint claims Discord misled parents and users about the effectiveness of its safety features, failed to enforce its minimum age restrictions, and exposed minors to violent and sexual content and predators, including by allowing easy access through default settings and weak age verification. The AG’s office asserts these practices violate New Jersey’s consumer fraud laws and seeks civil penalties and injunctions requiring improved safety measures.

“Discord claims that safety is at the core of everything it does, but the truth is, the application is not safe for children. Discord’s deliberate misrepresentation of the application’s safety settings has harmed—and continues to harm—New Jersey’s children, and must stop,” said Cari Fais, Director of the Division of Consumer Affairs.

Image-Based Sexual Abuse

Discord’s rules allow pornography. Yet the company fails to provide any meaningful age or consent verification of people depicted in sexual content to prevent image-based sexual abuse.

We define image-based sexual abuse (IBSA) as the creation, manipulation, theft, extortion, threatened or actual distribution, or any use of sexualized or sexually explicit materials without the meaningful consent of the person/s depicted or for purposes of sexual exploitation. Learn more here.

Discord technically prohibits non-consensual sharing of sexual content. But its enforcement is primarily reactive, depending heavily on users or victims reporting the images after the fact, which means Discord is largely waiting until after the harm is done.

It could stop IBSA by either banning pornographic content and enforcing that ban, or by implementing strong age and consent verification systems for each person depicted in sexual content. Instead, it does neither. By permitting largely unrestricted sexual content without meaningful safeguards, Discord enables image-based sexual abuse to flourish without consequence.

Sextortion

Discord’s core features include hidden/private servers, encrypted/end-to-end-like DMs, voice/video calls, and livestreaming with limited proactive oversight. These create isolated spaces where predators can build trust, isolate victims, coerce explicit content, and apply threats (sextortion) without easy detection. Moderation is largely reactive (user-reported) and relies on server owners/moderators, leaving many spaces unmonitored or poorly managed. And Discord’s current DM practices still allow sextortion because strangers can reach minors through shared servers or accepted message requests, and parents are left unaware.

Poorly moderated video calls and livestreaming particularly create vulnerability for sextortion. Many young people post online asking for advice after they’ve experienced sextortion on Discord.

Below is a small sample of sextortion cases involving Discord.

- March 13, 2026 — Man accused of online ‘sextortion’ targeting Somerset child

- A 22-year-old Pennsylvania man was arrested for “sextortion” of a child via Discord and Telegram. He posed as “Ollie” to engage in unlawful online interactions with juveniles across several states. He was arrested on a warrant, and a search of his home yielded evidence.

- February 20, 2026 — Silver Spring man sentenced to 50 years for child exploitation, including “sextortion” of more than 100 minors

- A man, 28, was sentenced to 50 years in federal prison (plus 25 years supervised release) after pleading guilty to producing child sexual abuse material. He targeted at least 108 girls (as young as 5) worldwide via platforms including Discord, coercing them into sending explicit images/videos with threats to share or harm them.

- December 2, 2025 — Five leaders of ‘Greggy’s Cult’ charged with sexually exploiting children on the internet

- Five men were indicted for allegedly leading “Greggy’s Cult,” a precursor to the 764 network. They used Discord servers to recruit minors (via Discord and gaming platforms like Roblox/CS:GO), coerce them into sexually explicit/degrading acts on video calls, capture recordings for blackmail, extort further content/self-harm, and distribute material.

- September 2025 — Roblox, Discord sued over 15-year-old boy’s suicide after alleged sexual abuse online

- The mother of 15-year-old Ethan Dallas filed a wrongful death lawsuit against Roblox and Discord. The suit alleges that her autistic son was groomed starting at age 12 by an adult predator who posed as a peer on Roblox, convinced him to disable parental controls, and then escalated the abuse by moving communications to Discord. There, the predator coerced Ethan into sending explicit photos and videos through threats of sharing the material or exposing him, contributing to severe mental health deterioration and Ethan’s death by suicide in April 2024. The lawsuit claims the platforms recklessly and deceptively operated without adequate safeguards (such as effective age verification, user screening, or moderation).

- May 1, 2025 — U.S. Attorney Charges Newburgh Man For Using Discord Platform To Extort Sexually Explicit Material

- A man was charged in the Southern District of New York for sextortion via Discord, threatening a 17-year-old minor with distributing explicit images/videos unless more were sent.

Ineffective Parental Controls

Discord’s parental controls have significant gaps that make them largely ineffective in practice.

Parents can only see who their teen is chatting with or which servers they join, but they cannot read the actual messages or view shared images, and they can’t remove friends or kick teens out of risky servers. Teens must actively opt in to link a parent via Family Center, and they can disconnect at any time, meaning parents often have no oversight at all.

Combined with easy-to-fake age settings and the platform’s lack of traditional supervision tools like chat logs or screen-time limits, these loopholes mean that, despite the promise of teen safety features, parents often cannot meaningfully protect their children on Discord.

Requests for Improvement

NCOSE recommends that Discord ban minors from using the platform until it is radically transformed.

Prioritize image-based sexual abuse (IBSA) and child sexual abuse material (CSAM) prevention and removal

How?

By instituting robust age and consent verification for every person depicted in sexually explicit content. If Discord doesn’t want to invest in this safeguarding approach, it should ban and prevent uploading or sharing of pornographic content.

Develop or improve a proactive internal flagging and review process for high-risk activity related to sextortion and grooming

Develop or improve a proactive internal flagging and review process for high-risk activity related to sextortion and grooming, such as high-volume friend requests, image sharing, and grooming language indicators. Collaborate with law enforcement, survivors, and subject matter experts to develop a robust methodology.

Roll out “teen‑by‑default” safety settings with strict age verification standards

Roll out “teen‑by‑default” safety settings with strict age verification standards so that minors’ accounts are consistently set to the highest level of safety and privacy available, including no access to livestreams, video calls, or one-to-one direct messaging.

Effectively block minors from joining servers that host sexually explicit content.

Add features to Parental Controls

- Friend requests must be parent-approved, especially requests from server or group members or individuals with IP addresses in other countries or states

- Parents should receive elevated notifications when adults request to friend a minor or when a minor requests to friend an adult

- Require parental consent before a child can join any new Discord server. Similar to how app stores can verify a parent’s permission before a child downloads a new app, this step would raise awareness of potential risks and help children set up their accounts safely.

Permanently suspend Pornhub’s verified Discord account.

Fast Facts

Rated 16+ on Apple App Store, rated T (Teen) on Google Play

#4 platform identified by sextortion victims as the one extortionists used to communicate threats (behind Snapchat, Instagram, and Facebook Messenger)

28% of U.S. teens have used Discord.

Resources

Report suspected child sexual exploitation to the National Center for Missing and Exploited Children (NCMEC) CyberTipline

NCMEC’s Take It Down service: Resource for minors to remove their sexually explicit content from online platforms

Thorn’s Guide to Identify Sextortion: What to do if someone is blackmailing you with nudes

Stop Non-Consensual Intimate Image Abuse (StopNCII): Resource for adults to remove image-based sexual abuse from online platforms

The Bark Blog: What is Discord and is it safe?

Protect Young Eyes: Discord App Review

Recommended Reading

Fox 13 Tampa Bay:

Florida launches investigation into Discord app claiming it’s where predators contact kids: ‘This has to stop’

ABC News:

DOJ charges former Navy sailor, 4 others for alleged roles in 'monstrous' online extortion group
