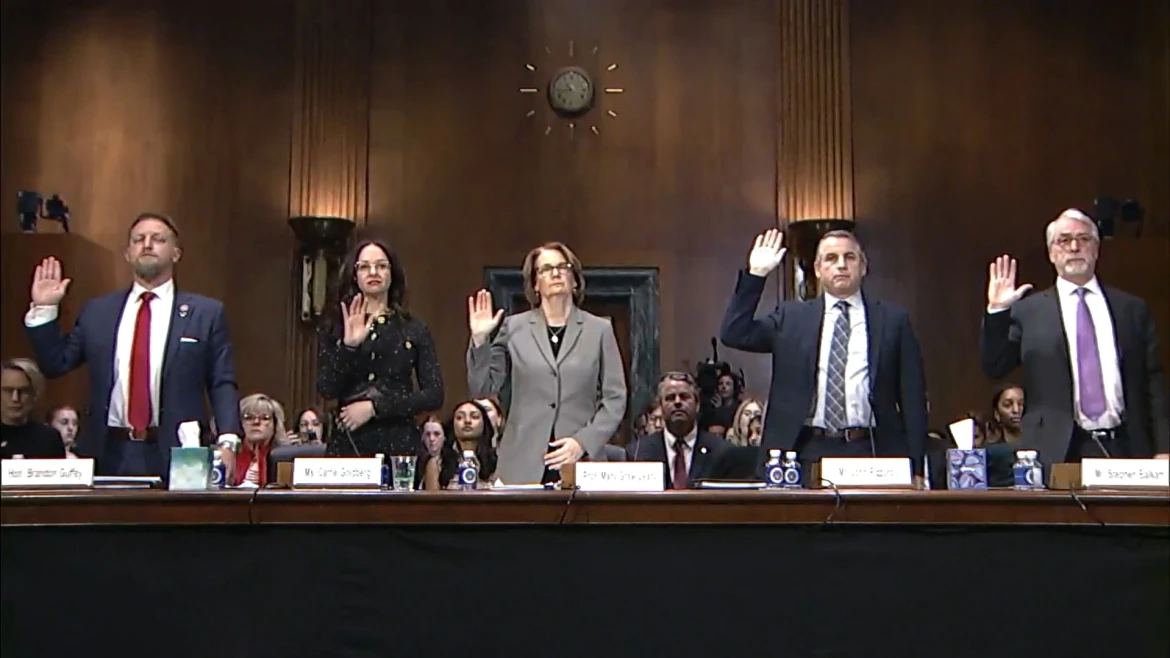

Podcast: Deepfake Technology and A.I. in Sexual Exploitation

“Children being the targets of this deepfake technology is our worst nightmare.” Haley and Dani discuss the current state of artificial intelligence and deepfake technology

Haley McNamara and Dani Pinter discuss The Guardian article: “‘I didn’t start out wanting to see kids’: are porn algorithms feeding a generation of paedophiles

Haley McNamara (NCOSE Senior VP of Programs and Initiatives) and Dani Pinter (Senior VP and Director at the NCOSE Law Center) talk about Section 230

This year’s Dirty Dozen List has a different look. Read here to learn about its exciting new twist!

Social media’s harms to children are undeniable. But that doesn’t go away when you turn 18. Read for how social media affects today’s young adult women.

Discord is a hotspot for child sexual abuse material and image-based sexual abuse (i.e. deepfake pornography).

Section 230 is the greatest enabler of sexual exploitation in the digital age. It MUST be repealed.

In this special episode of the Ending Sexploitation Podcast, Lisa Thompson (VP of Research at NCOSE) chats with Kristen Jenson about the harms of pornography.

Preventing 21,600 sextortion incidents. Passing laws holding sex buyers accountable. Taking down Pornhub in court. And more!

At 12 years old, despite her parents’ objections, Chelsea (pseudonym) made an Instagram account, easily fudging her real birthday to meet Instagram’s age requirement. But things quickly
