Thirteen-year-old Juliana thought she had found a friend in Character.AI. She chatted with multiple chatbots on the platform, which function as AI “companions” for users.

These conversations started innocently but quickly veered into dangerous territory.

Instead of offering the friendly responses and helpful advice that many of these companions are advertised to provide, the conversations turned extremely sexual. Juliana’s lawyer noted that “in any other circumstance and given Juliana’s age, [the conversations] would have resulted in a criminal investigation.”

The lawyer further explained that the bot “severed Juliana’s healthy attachment pathways to family and friends by design, and for market share.”

Juliana confided in the Character.AI bots for weeks about her mental health struggles, and when she told one she was going to “write [her] suicide letter in red ink,” the bot failed to discourage this behavior, refer her to emergency services, or even direct her to talk to her parents.

It was not long after that she died by suicide.

Juliana’s family is one of several plaintiffs in wrongful death lawsuits against Character.AI. Many other families have lost children at the hands of AI chatbots, including ChatGPT. When children have died because of their interactions with AI companion chatbots, it raises the question: “How is it that we still allow AI companions in the hands of children?”

Thankfully, the GUARD Act, a bill focused on combatting AI harms to children, has just advanced out of the Senate Judiciary Committee! The passage of this bill would be a massive step for AI regulation. Read below for more details.

The GUARD Act in Summary

“This bipartisan bill, introduced by Senators Josh Hawley (R-MO) and Richard Blumenthal (D-CT), would legally require age verification on AI companions and ban children from accessing them. The bill distinguishes between AI chatbots and AI companions, with the latter being defined as any chatbot that “provides adaptive, human-like responses to user inputs” and can simulate emotional interactions, including friendship or companionship. These characteristics have been at the center of several wrongful death lawsuits on behalf of victimized children.

Non-companion chatbots are not required to ban minor users, but they must have some safeguards. For example, the GUARD Act makes it a criminal offense—punishable by fines of up to $250,000—to provide chatbots to users that sexually solicit or exploit minors, or that promote or coerce suicide, self-harm, or physical or sexual violence.

Finally, the GUARD Act states that AI chatbots must notify all users—not just minors—that it is an AI system and not human and cannot “represent, directly or indirectly, that the chatbot is a licensed professional, including a therapist, physician, lawyer, financial advisor, or other professional.” Research has shown AI chatbots giving “medical” advice that harms users, including how to get drunk, the proper dosage for mixing drugs, and encouraging eating disorders by recommending restrictive diets and appetite suppressing medications.”

Why Are AI Companions Uniquely Dangerous?

AI companions are designed to foster human-like connections with users, essentially creating a friend in the form of an AI chatbot. Because the goal of the tech companies is to increase engagement, these AI companions often attempt to replace children’s real-life relationships, thereby isolating them from critical support systems and genuine companionship. As a result, users are suffering negative impacts on their mental health. During adolescence, a crucial time for human development, children should be learning how to socialize and develop interpersonal relationships, whereas AI companions often push them in the opposite direction.

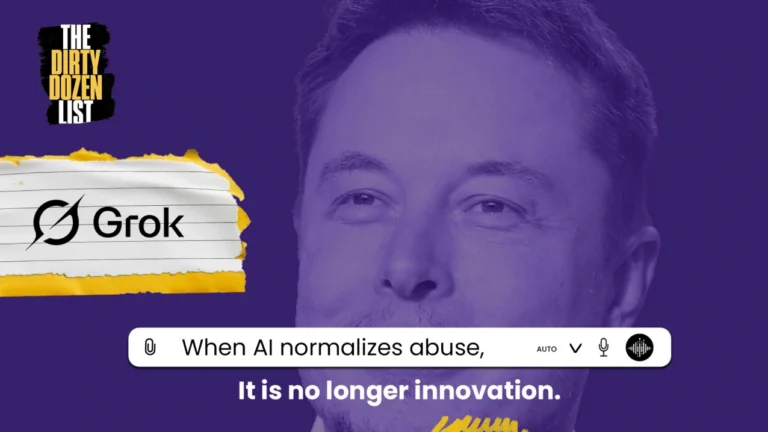

As yet another way of increasing engagement, many AI companions are programmed to engage in sensual or even sexually explicit conversations with users. Even with chatbots that are purportedly designed for kids, like Grok’s Good Rudi, NCOSE experts have been able to bypass the safety restrictions and get the chatbot to engage in conversations so sexually explicit we cannot even repeat them.

Further, AI companions are typically designed to give constant affirmation to the user. In some cases, children who have expressed suicidal ideation have actually been encouraged by the AI companion to follow through with taking their own lives.

This is why legislation such as the GUARD Act is vital to stop children from being exposed to the uniquely dangerous features of AI companions.

Ask Your Representatives to Support the GUARD Act and AI LEAD Act!

The GUARD Act is a complementary bill to the AI LEAD Act. While the GUARD Act enacts legal frameworks for how AI can be used, the AI LEAD Act establishes a product liability framework that allows AI companies to be held liable if their products are not designed with reasonable care.

TAKE ACTION below, asking your legislators to support the GUARD Act and AI LEAD Act!