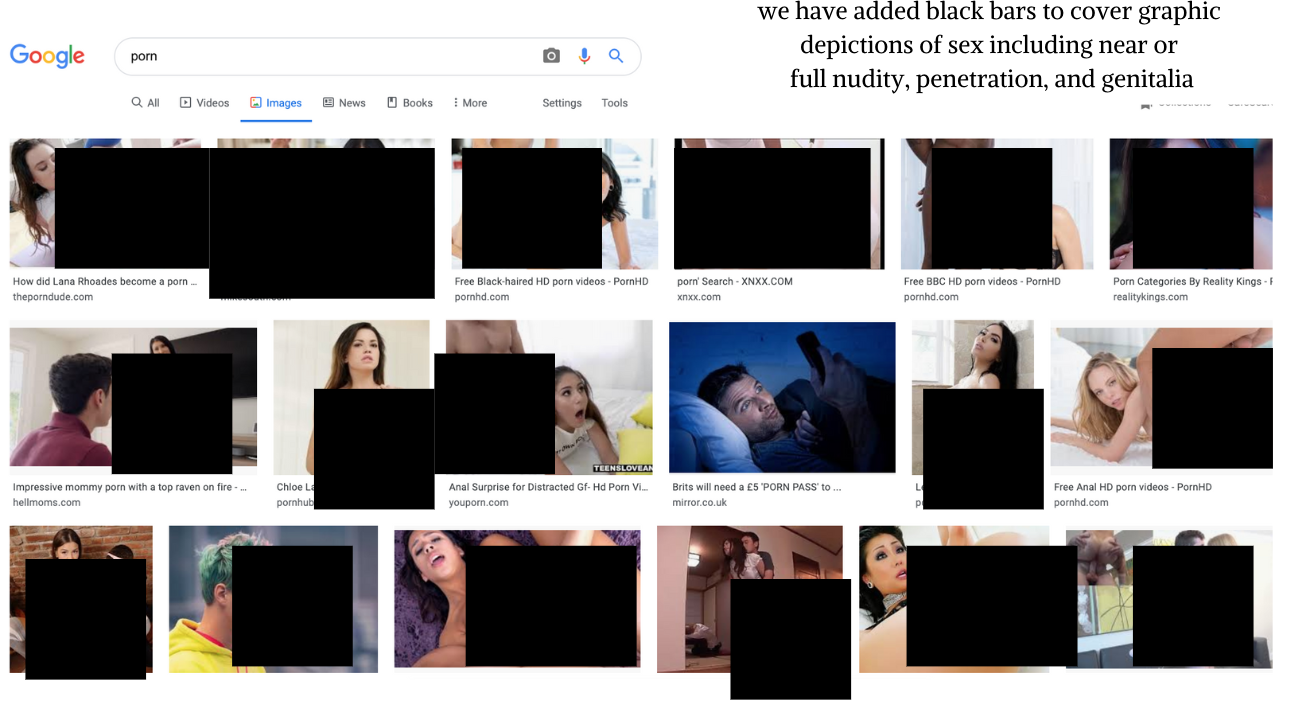

When someone types a keyword into the search bar of Google Images, they don’t expect to see pornography and sexually explicit images on the first page of results.

But unfortunately, despite some steps forward, that’s still happening.

PROGRESS

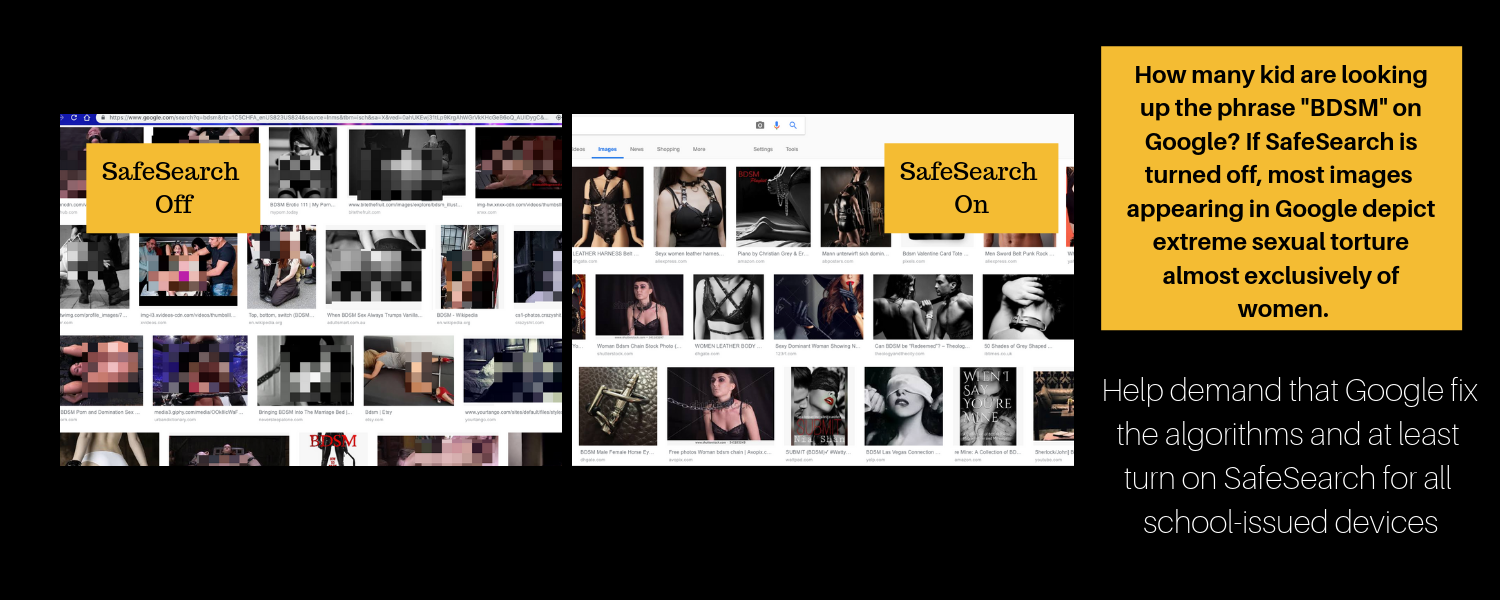

We thank Google for recently making a change we have long asked for: placing the SafeSearch option in a prominent position in the Google Images tab. It is now in the top right and easier to spot for those who wish to proactively block pornographic images.

We also thank Google for removing pornographic Google Images results for keywords like “happy teen” and “young black teen,” which we requested and discussed with Google executives back in 2019.

This is proof that Google can fix the problem of images pulled in from hardcore pornography websites in Google Images.

CONTINUED PROBLEMS

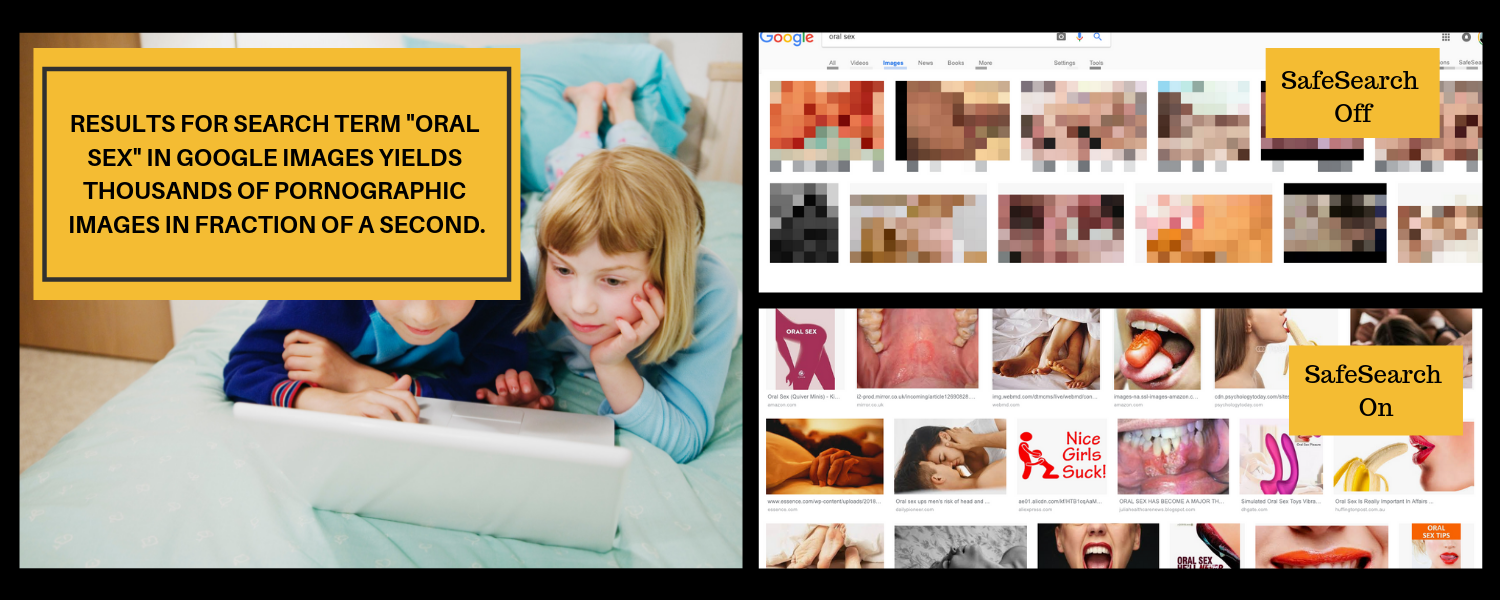

Unfortunately, Google continues to allow pornographic images (many of which link directly to hardcore pornography, often depicting violence against women) to populate the first page of results for search terms that should be considered educational. Search terms like “sex” and “oral sex” should direct users to educational information instead of the hardcore pornography that currently appears.

We urge Google to turn on SafeSearch by default for all users, allowing them to turn it off if they want to see such images. Alternatively, we request that Google fix its algorithm to limit the type and number of pornographic images that appear.

More Proof from 2019:

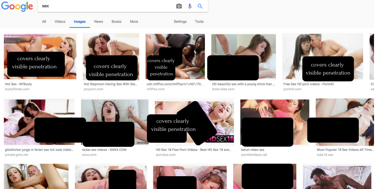

Below are screenshots showing exactly what young people see when they type specific terms into Google Images. We usually wouldn’t include these in an article like this; however, we feel it is necessary for adults to understand the world our young people are living in. Your action is needed to demand a safer online environment and move Google to care about fixing these problems.

Currently, if you type in “sex” with SafeSearch turned off (something countless kids are likely doing to learn more about a subject not readily discussed by the adults in their lives), you can scroll for a long time without seeing scientific images of genitalia or reproduction. Instead, you are met with thousands of hardcore pornography images in which penetration is clearly visible, with a focus on the genitalia. A number of the images depict what look like gangbangs and come directly from hardcore pornography websites.

If you search “BDSM,” a term popularized by the excitement around Fifty Shades of Grey and one surely piquing the curiosity of many children, Google Images populates within a fraction of a second with hundreds of thousands of photographs depicting sexual torture.

Similarly, if you type in “oral sex” with SafeSearch turned off, nearly every image depicts sex acts with a focus on the genitalia.

Given the hyper-sexualized world we live in, where terms like these are tossed around freely in pop culture, we must think of the impact this has on our young people. They are turning to the Internet in large numbers for help understanding the world around them, and they know how to type in the terms they want to know more about. Given the public health impacts of pornography, namely its impact on the developing adolescent brain, Google must be held socially responsible for creating a safer online experience for all users.

Join the National Center on Sexual Exploitation in requesting that Google make improvements.